-

Notifications

You must be signed in to change notification settings - Fork 316

Description

Goal

Simplify deployment requirements by reducing the need to select the best eviction policy, which is workload dependent and can change over the lifetime of an application. Instead, use simple algorithmic techniques to dynamically reconfigure the cache for the current workload. This allows the cache to robustly make near optimal eviction choices in a wider range of workload patterns.

Context

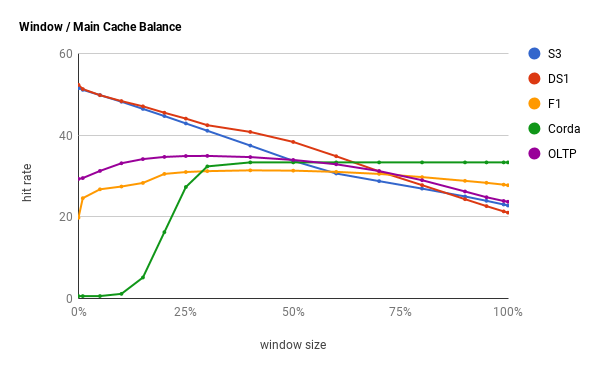

The TinyLFU implementation uses a static configuration between the tiny / main regions. Per the paper, this is defaulted to 1% / 99% to favor frequency-biased workloads, such as databases and search engines. However, some workloads are highly skewed towards recency such as blockchain mining and social networks. See for example Twitter's data sets where LRU is near optimal. In such cases the frequency filter can degrade the hit ratio, as shown below.

The implementation states that this static parameter does not need to be tuned. While some users might realize their workload bias and choose a different eviction policy, ideally the algorithm is intelligent to discover the optimal setting. In the Caffeine library, this is done by using hill climbing (short article, paper).

Suggested Approach

Use simple hill climbing to guess at a new configuration, sample the hit rate, calculate a new step size based on if the change was an improvement, adjust, and repeat. In Caffeine the initial step size is 6.25% and it decays at a rate of 0.98 so that the policy converges (rather than oscillates around) the best configuration. This process restarts if the hit rate changes by 5% or more. The sample period should be large enough to avoid a noisy hit rate and can piggyback on the reset interval for decaying the access frequency counters. As shown below, this approach can handle highly skewed workloads that change with the environment.

Success Metrics

- Achieve a high hit ratio in LRU-biased workloads

- Achieve a high hit ratio in LFU / MRU biased workloads, like databases and search engines.

- Dynamically reconfigure when the workload pattern changes, throughout the lifetime of the cache, to optimize for the new environment.

Additional Suggestions

- By default, the CountMinSketch uses uint32 counters. This can be reduced to 4-bit counters without degrading the hit rate, as the goal is to compare entries to determine if one is hotter than the other. That can be further reduced by incorporating the doorkeeper mechanism, a bloom filter, so that fewer counters are needed.

- The concurrency model requires locking to update the eviction policy. If embedded as a local cache this might observe contention, hence a tryLock is used. A ring buffer to sample the access history is a more efficient way to this as it reduces the CASes required (short article).

- The item's allocation size can be leveraged by the admission policy to improve the hit rate (paper).

- Incorporate jitter if the policy is subject to hash flooding attacks.

- Simulate workloads against the Java implementation to validate that the policy achieves a similar hit rate.