diff --git a/.github/ISSUE_TEMPLATE/bug_report.md b/.github/ISSUE_TEMPLATE/bug_report.md

new file mode 100644

index 000000000..dd84ea782

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/bug_report.md

@@ -0,0 +1,38 @@

+---

+name: Bug report

+about: Create a report to help us improve

+title: ''

+labels: ''

+assignees: ''

+

+---

+

+**Describe the bug**

+A clear and concise description of what the bug is.

+

+**To Reproduce**

+Steps to reproduce the behavior:

+1. Go to '...'

+2. Click on '....'

+3. Scroll down to '....'

+4. See error

+

+**Expected behavior**

+A clear and concise description of what you expected to happen.

+

+**Screenshots**

+If applicable, add screenshots to help explain your problem.

+

+**Desktop (please complete the following information):**

+ - OS: [e.g. iOS]

+ - Browser [e.g. chrome, safari]

+ - Version [e.g. 22]

+

+**Smartphone (please complete the following information):**

+ - Device: [e.g. iPhone6]

+ - OS: [e.g. iOS8.1]

+ - Browser [e.g. stock browser, safari]

+ - Version [e.g. 22]

+

+**Additional context**

+Add any other context about the problem here.

diff --git a/.github/ISSUE_TEMPLATE/custom.md b/.github/ISSUE_TEMPLATE/custom.md

new file mode 100644

index 000000000..b894315f4

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/custom.md

@@ -0,0 +1,8 @@

+---

+name: Custom issue template

+about: Describe this issue template's purpose here.

+title: ''

+labels: ''

+assignees: ''

+

+---

diff --git a/.github/ISSUE_TEMPLATE/feature_request.md b/.github/ISSUE_TEMPLATE/feature_request.md

new file mode 100644

index 000000000..bbcbbe7d6

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/feature_request.md

@@ -0,0 +1,20 @@

+---

+name: Feature request

+about: Suggest an idea for this project

+title: ''

+labels: ''

+assignees: ''

+

+---

+

+**Is your feature request related to a problem? Please describe.**

+A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

+

+**Describe the solution you'd like**

+A clear and concise description of what you want to happen.

+

+**Describe alternatives you've considered**

+A clear and concise description of any alternative solutions or features you've considered.

+

+**Additional context**

+Add any other context or screenshots about the feature request here.

diff --git a/.github/pull_request_template.md b/.github/pull_request_template.md

new file mode 100644

index 000000000..557ee035d

--- /dev/null

+++ b/.github/pull_request_template.md

@@ -0,0 +1,44 @@

+# Pull Request Description

+

+# Jira Ticket Link

+

+## Description

+

+Please include a summary of the change and which issue is fixed. Please also include relevant motivation and context. List any dependencies that are required for this change.

+

+Fixes # (issue)

+

+## Type of change

+

+Please delete options that are not relevant.

+

+- [ ] Bug fix (non-breaking change which fixes an issue)

+- [ ] New feature (non-breaking change which adds functionality)

+- [ ] Breaking change (fix or feature that would cause existing functionality to not work as expected)

+- [ ] This change requires a documentation update

+

+## How Has This Been Tested?

+

+Please describe the tests that you ran to verify your changes. Provide instructions so we can reproduce. Please also list any relevant details for your test configuration

+

+- [ ] Test A

+- [ ] Test B

+

+**Test Configuration**:

+* Firmware version:

+* Hardware:

+* Toolchain:

+* SDK:

+* API:

+

+## Checklist:

+

+- [ ] My code follows the style guidelines of this project

+- [ ] I have performed a self-review of my own code

+- [ ] I have commented my code, particularly in hard-to-understand areas

+- [ ] I have made corresponding changes to the documentation

+- [ ] My changes generate no new warnings

+- [ ] I have added tests that prove my fix is effective or that my feature works

+- [ ] New and existing unit tests pass locally with my changes

+- [ ] Any dependent changes have been merged and published in downstream modules

+- [ ] I have checked my code and corrected any misspellings

diff --git a/.github/workflows/python-app.yml b/.github/workflows/python-app.yml

new file mode 100644

index 000000000..77d88ee40

--- /dev/null

+++ b/.github/workflows/python-app.yml

@@ -0,0 +1,31 @@

+# This workflow will install Python dependencies, run tests and lint with a single version of Python

+# For more information see: https://docs.github.com/en/actions/automating-builds-and-tests/building-and-testing-python

+

+name: Python application

+

+on:

+  push:

+    branches: [ "main" ]

+  pull_request:

+    branches: [ "main" ]

+

+permissions:

+  contents: read

+

+jobs:

+  build:

+

+    runs-on: ubuntu-latest

+

+    steps:

+    - uses: actions/checkout@v3

+    - name: Set up Python 3.10

+      uses: actions/setup-python@v3

+      with:

+        python-version: "3.10"

+    - name: Install dependencies

+      run: |

+        cd oneAPI_ODAV_APP

+        python -m pip install --upgrade pip

+        pip install -r requirements.txt

+        pip install -r requirements_gpu.txt

\ No newline at end of file

diff --git a/.gitignore b/.gitignore

new file mode 100644

index 000000000..b2e412853

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,265 @@

+/oneAPI_ODAV_APP/Model/yolov7.pt

+/oneAPI_ODAV_APP/Model/yolov7-e6e.pt

+

+*.jpg

+*.jpeg

+*.png

+*.bmp

+*.tif

+*.tiff

+*.heic

+*.JPG

+*.JPEG

+*.PNG

+*.BMP

+*.TIF

+*.TIFF

+*.HEIC

+*.mp4

+*.mov

+*.MOV

+*.avi

+*.data

+*.json

+*.cfg

+!setup.cfg

+!cfg/yolov3*.cfg

+

+storage.googleapis.com

+runs/*

+data/*

+data/images/*

+!data/*.yaml

+!data/hyps

+!data/scripts

+!data/images

+!data/images/zidane.jpg

+!data/images/bus.jpg

+!data/*.sh

+

+results*.csv

+

+# Datasets -------------------------------------------------------------------------------------------------------------

+coco/

+coco128/

+VOC/

+

+coco2017labels-segments.zip

+test2017.zip

+train2017.zip

+val2017.zip

+

+# MATLAB GitIgnore -----------------------------------------------------------------------------------------------------

+*.m~

+*.mat

+!targets*.mat

+

+# Neural Network weights -----------------------------------------------------------------------------------------------

+*.weights

+*.pt

+*.pb

+*.onnx

+*.engine

+*.mlmodel

+*.torchscript

+*.tflite

+*.h5

+*_saved_model/

+*_web_model/

+*_openvino_model/

+darknet53.conv.74

+yolov3-tiny.conv.15

+*.ptl

+*.trt

+

+# GitHub Python GitIgnore ----------------------------------------------------------------------------------------------

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+env/

+build/

+develop-eggs/

+dist/

+downloads/

+eggs/

+.eggs/

+lib/

+lib64/

+parts/

+sdist/

+var/

+wheels/

+*.egg-info/

+/wandb/

+.installed.cfg

+*.egg

+events.out.tfevents.*

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+.hypothesis/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+target/

+

+# Jupyter Notebook

+.ipynb_checkpoints

+

+# pyenv

+.python-version

+

+# celery beat schedule file

+celerybeat-schedule

+

+# SageMath parsed files

+*.sage.py

+

+# dotenv

+.env

+

+# virtualenv

+.venv*

+venv*/

+ENV*/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+

+

+# https://github.com/github/gitignore/blob/master/Global/macOS.gitignore -----------------------------------------------

+

+# General

+.DS_Store

+.AppleDouble

+.LSOverride

+

+# Icon must end with two \r

+Icon

+Icon?

+

+# Thumbnails

+._*

+

+# Files that might appear in the root of a volume

+.DocumentRevisions-V100

+.fseventsd

+.Spotlight-V100

+.TemporaryItems

+.Trashes

+.VolumeIcon.icns

+.com.apple.timemachine.donotpresent

+

+# Directories potentially created on remote AFP share

+.AppleDB

+.AppleDesktop

+Network Trash Folder

+Temporary Items

+.apdisk

+

+

+# https://github.com/github/gitignore/blob/master/Global/JetBrains.gitignore

+# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio and WebStorm

+# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

+

+# User-specific stuff:

+.idea/*

+.idea/**/workspace.xml

+.idea/**/tasks.xml

+.idea/dictionaries

+.html # Bokeh Plots

+.pg # TensorFlow Frozen Graphs

+.avi # videos

+

+# Sensitive or high-churn files:

+.idea/**/dataSources/

+.idea/**/dataSources.ids

+.idea/**/dataSources.local.xml

+.idea/**/sqlDataSources.xml

+.idea/**/dynamic.xml

+.idea/**/uiDesigner.xml

+

+# Gradle:

+.idea/**/gradle.xml

+.idea/**/libraries

+

+# CMake

+cmake-build-debug/

+cmake-build-release/

+

+# Mongo Explorer plugin:

+.idea/**/mongoSettings.xml

+

+## File-based project format:

+*.iws

+

+## Plugin-specific files:

+

+# IntelliJ

+out/

+

+# mpeltonen/sbt-idea plugin

+.idea_modules/

+

+# JIRA plugin

+atlassian-ide-plugin.xml

+

+# Cursive Clojure plugin

+.idea/replstate.xml

+

+# Crashlytics plugin (for Android Studio and IntelliJ)

+com_crashlytics_export_strings.xml

+crashlytics.properties

+crashlytics-build.properties

+fabric.properties

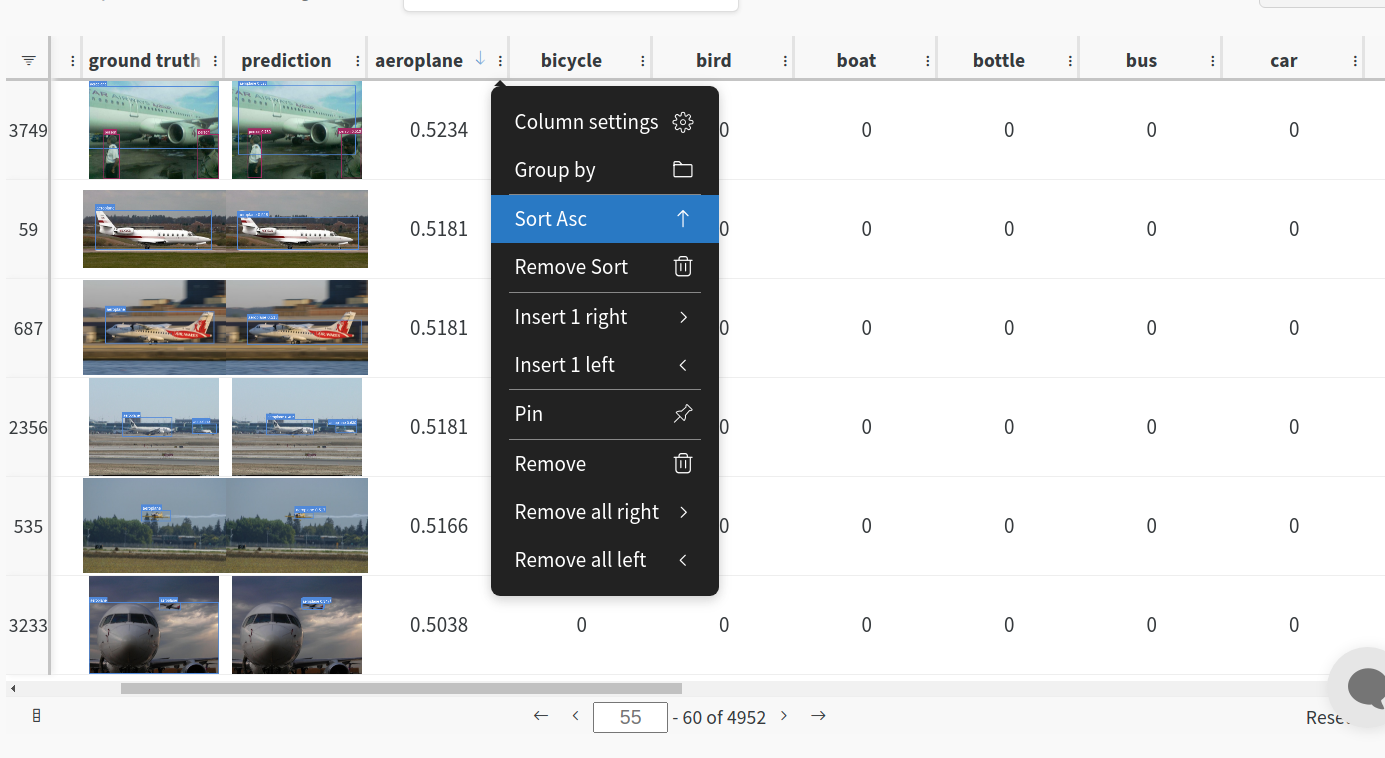

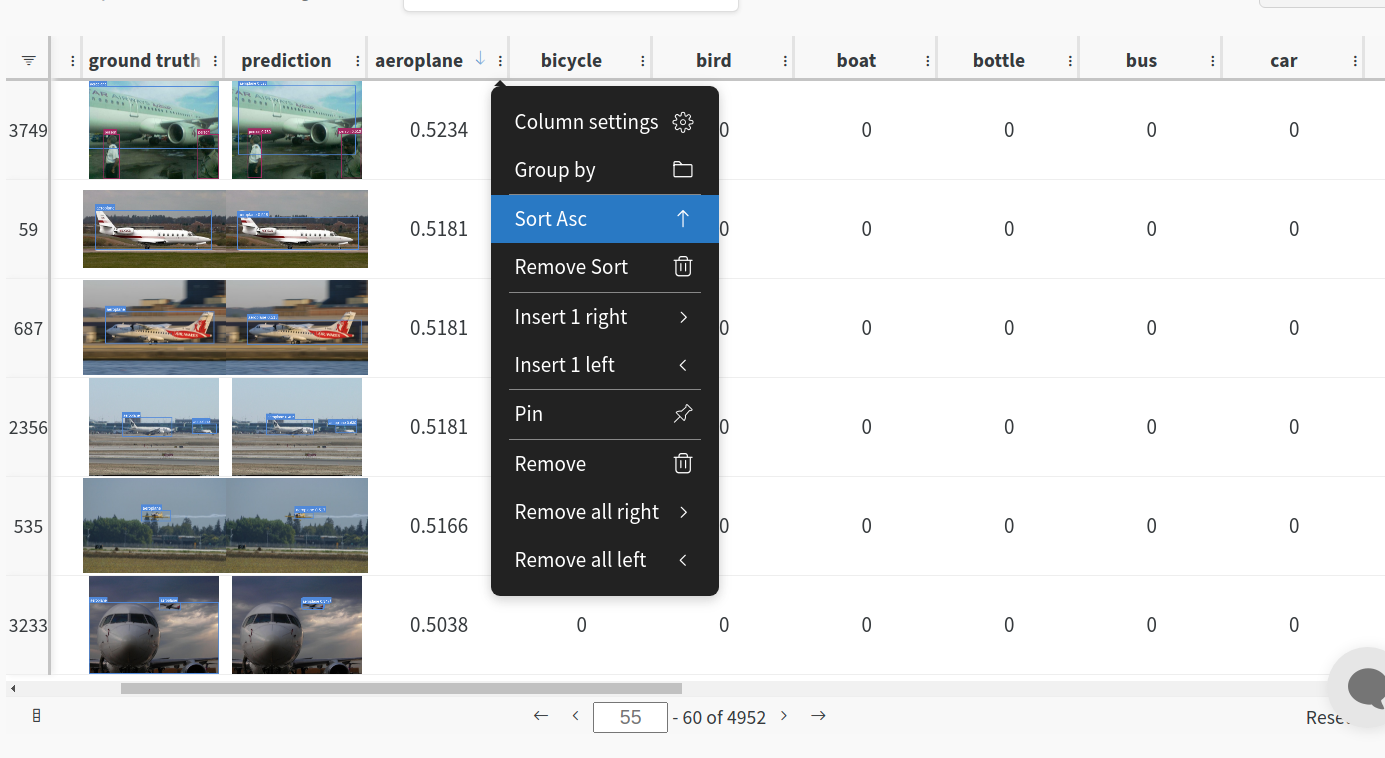

diff --git a/Data/Screenshots/01.png b/Data/Screenshots/01.png

new file mode 100644

index 000000000..5aab1b9d5

Binary files /dev/null and b/Data/Screenshots/01.png differ

diff --git a/Data/Screenshots/02.png b/Data/Screenshots/02.png

new file mode 100644

index 000000000..fed44b196

Binary files /dev/null and b/Data/Screenshots/02.png differ

diff --git a/Data/Screenshots/03.png b/Data/Screenshots/03.png

new file mode 100644

index 000000000..8e8486fde

Binary files /dev/null and b/Data/Screenshots/03.png differ

diff --git a/Data/Screenshots/04.png b/Data/Screenshots/04.png

new file mode 100644

index 000000000..91cfc9192

Binary files /dev/null and b/Data/Screenshots/04.png differ

diff --git a/Data/demo.gif b/Data/demo.gif

new file mode 100644

index 000000000..36e2adf38

Binary files /dev/null and b/Data/demo.gif differ

diff --git a/Data/demo_seg.gif b/Data/demo_seg.gif

new file mode 100644

index 000000000..9e018ff69

Binary files /dev/null and b/Data/demo_seg.gif differ

diff --git a/Data/hack2skill Intel OneAPI Hackathon.docx b/Data/hack2skill Intel OneAPI Hackathon.docx

new file mode 100644

index 000000000..285cb3cd8

Binary files /dev/null and b/Data/hack2skill Intel OneAPI Hackathon.docx differ

diff --git a/Data/oneAPI ODAV.drawio b/Data/oneAPI ODAV.drawio

new file mode 100644

index 000000000..8a4036e48

--- /dev/null

+++ b/Data/oneAPI ODAV.drawio

@@ -0,0 +1,232 @@

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

diff --git a/Data/oneAPI_ODAV Hack2skill Final INTEL oneAPI Hackathon PPT.pdf b/Data/oneAPI_ODAV Hack2skill Final INTEL oneAPI Hackathon PPT.pdf

new file mode 100644

index 000000000..cc34a39d7

Binary files /dev/null and b/Data/oneAPI_ODAV Hack2skill Final INTEL oneAPI Hackathon PPT.pdf differ

diff --git a/Data/oneAPI_ODAV Hack2skill Final INTEL oneAPI Hackathon PPT.pptx b/Data/oneAPI_ODAV Hack2skill Final INTEL oneAPI Hackathon PPT.pptx

new file mode 100644

index 000000000..b8c8a024c

Binary files /dev/null and b/Data/oneAPI_ODAV Hack2skill Final INTEL oneAPI Hackathon PPT.pptx differ

diff --git a/Data/oneAPI_ODAV.png b/Data/oneAPI_ODAV.png

new file mode 100644

index 000000000..b14444a48

Binary files /dev/null and b/Data/oneAPI_ODAV.png differ

diff --git a/Data/pytorchvsipex.png b/Data/pytorchvsipex.png

new file mode 100644

index 000000000..8d3f17830

Binary files /dev/null and b/Data/pytorchvsipex.png differ

diff --git a/LICENSE b/LICENSE

new file mode 100644

index 000000000..8ba114299

--- /dev/null

+++ b/LICENSE

@@ -0,0 +1,21 @@

+MIT License

+

+Copyright (c) 2023 Intelegix Labs

+

+Permission is hereby granted, free of charge, to any person obtaining a copy

+of this software and associated documentation files (the "Software"), to deal

+in the Software without restriction, including without limitation the rights

+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

+copies of the Software, and to permit persons to whom the Software is

+furnished to do so, subject to the following conditions:

+

+The above copyright notice and this permission notice shall be included in all

+copies or substantial portions of the Software.

+

+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

+SOFTWARE.

diff --git a/README.md b/README.md

index 81463bfd7..856786046 100644

--- a/README.md

+++ b/README.md

@@ -1,17 +1,170 @@

-# intel-oneAPI

+oneAPI ODAV

-#### Team Name -

-#### Problem Statement -

-#### Team Leader Email -

+#### Team Name - Hitaya

+#### Problem Statement - Object Detection For Autonomous Vehicles (DEMO)

+#### Team Leader Email - manijb13@gmail.com

## A Brief of the Prototype:

- This section must include UML Daigrms and prototype description

+ This section includes UML diagrams and a prototype description

+ - OneAI_ODAV PPT here.

+

+

+  +

+

+

+

+  +

+  +

+

## Tech Stack:

List Down all technologies used to Build the prototype **Clearly mentioning Intel® AI Analytics Toolkits, it's libraries and the SYCL/DCP++ Libraries used**

+ - Intel® AI Analytics Toolkits

+ - Intel Distribution for Python

## Step-by-Step Code Execution Instructions:

- This Section must contain set of instructions required to clone and run the prototype, so that it can be tested and deeply analysed

+ This Section must contain set of instructions required to clone and run the prototype, so that it can be tested and deeply analysed

+ - Scroll down to the Project Architecture section, where the steps to run the prototype are explained in detail.

## What I Learned:

Write about the biggest learning you had while developing the prototype

+ - We learned to custom label/annotate the objects to be detected.

+ - We came up with novel algorithms for different kinds of object detection specific to autonomous cars.

+ - Using Intel® AI Analytics Toolkits, we were able to speed up model training.

+ - Our application works under all kinds of weather conditions and provides proper analysis of the data.

+

+  +

+

+

+

+## Features

+- Car dashboard application that displays the objects detected by the YOLOv7 model.

+- We use the Tiny YOLOv7 model architecture so that the car dashcam application runs on very modest hardware.

+- Custom labelling tool for labelling images yourself from within the application.

+- Once connected to the internet, the application sends data points such as userid, detected_image, label, bounding_box_co-ordinate, latitude, and longitude through a REST API.

+- The REST API saves the real-time data in the database and sends it to the Admin Web Interface.

+- The custom YOLOv7 model is retrained automatically every week with new data points, and the car dashboard application is updated over the internet to improve the model's accuracy over time.

+

+- Install Python 3.10 and its required Packages like PyTorch etc.

+

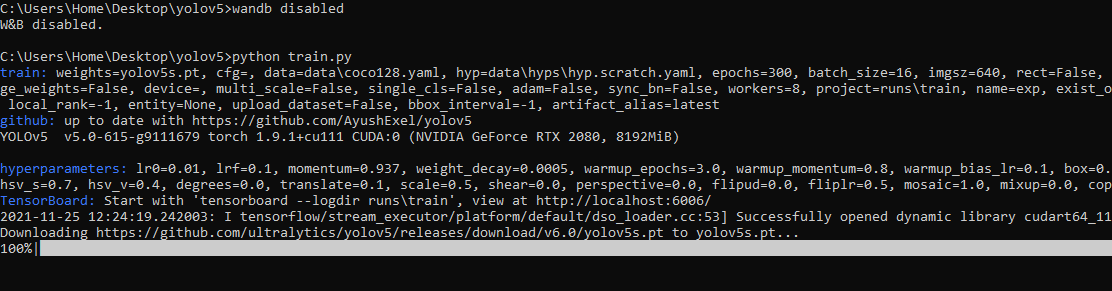

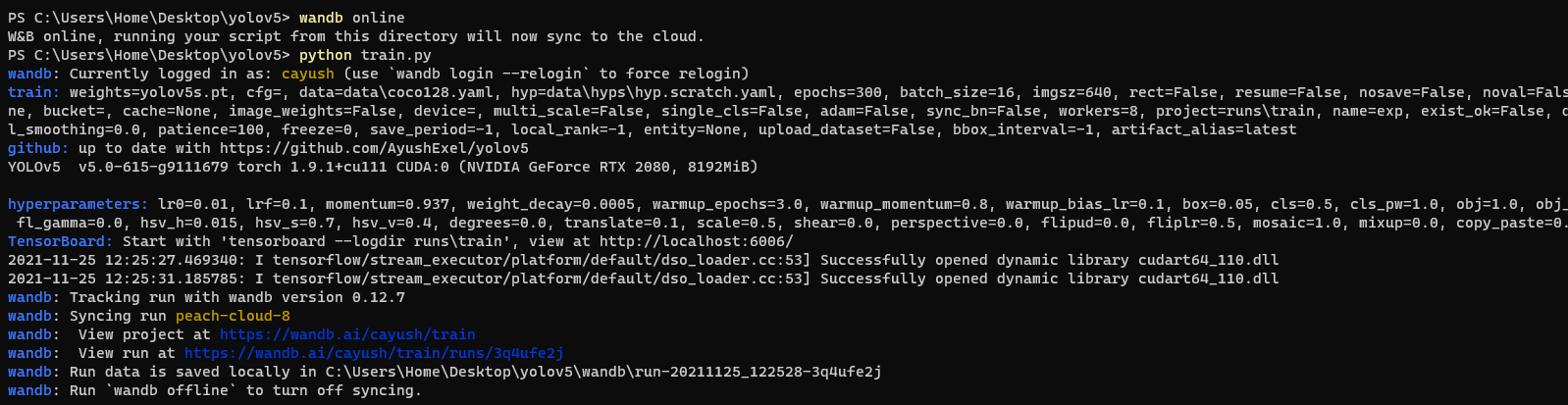

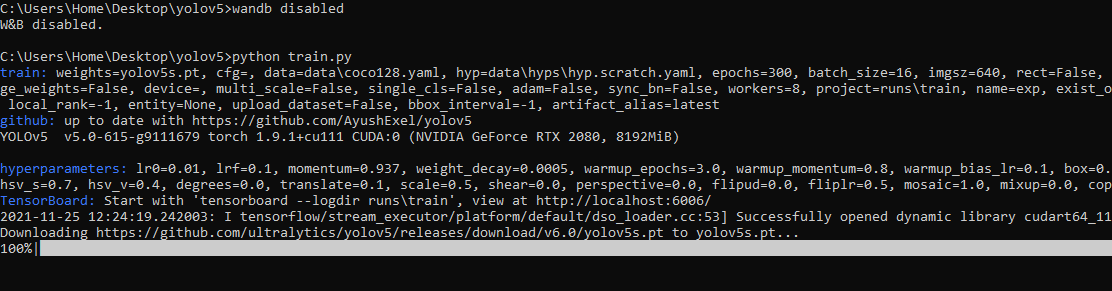

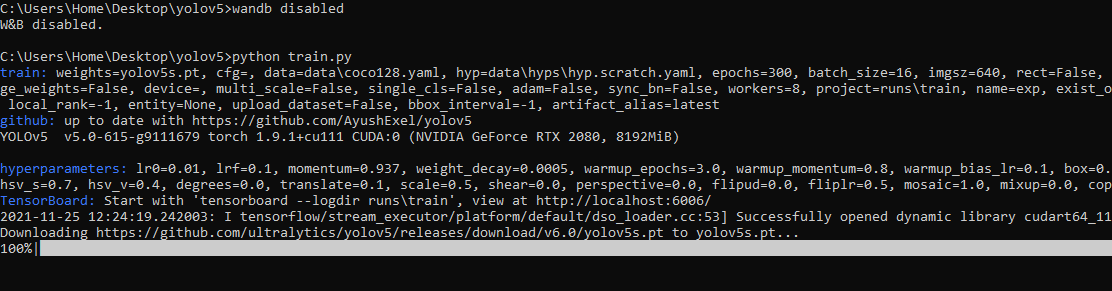

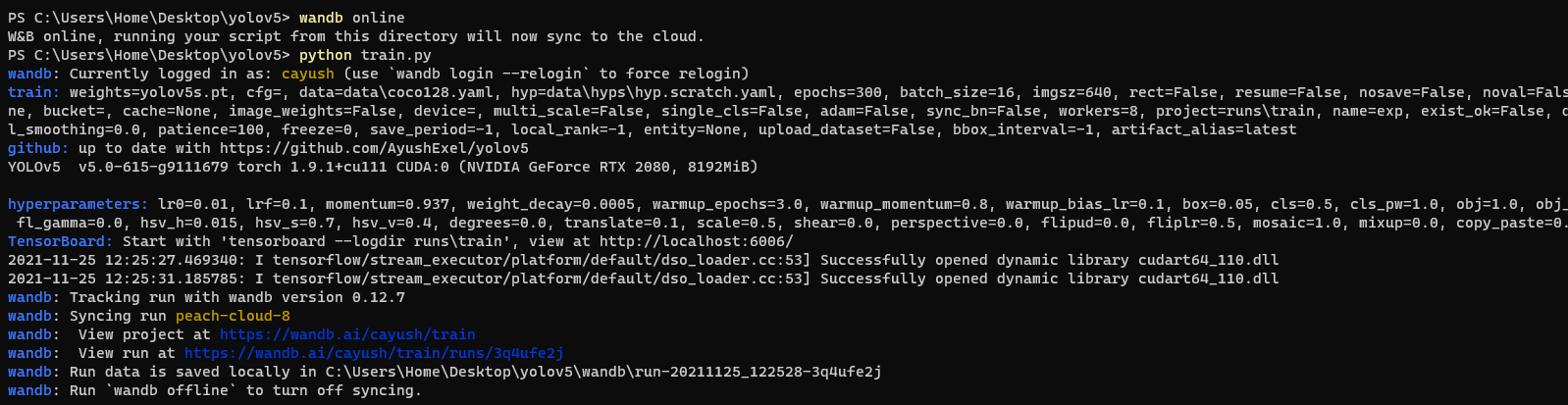

+## 2. Train the YoloV7 Object Detection Model

+

+#### Open Image Labelling Tool

+

+```commandline

+labelImg

+```

+

+#### Add more data from the already labelled images

+

+```

+git clone https://github.com/IntelegixLabs/smartathon-dataset

+cd smartathon-dataset

+# Add the train, val, and test data to oneAPI_ODAV/yolov7-custom/data

+```

+

+#### Train the custom Yolov7 Model

+

+```commandline

+git clone https://github.com/IntelegixLabs/oneAPI_ODAV

+cd oneAPI_ODAV

+cd yolov7-custom

+pip install -r requirements.txt

+pip install -r requirements_gpu.txt

+pip3 install torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu117

+python train.py --workers 1 --device 0 --batch-size 8 --epochs 100 --img 640 640 --data data/custom_data.yaml --hyp data/hyp.scratch.custom.yaml --cfg cfg/training/yolov7-custom.yaml --name yolov7-custom --weights yolov7.pt

+

+```

+## 3. Getting Started With the Car Dashboard Application

+

+- Clone the repo and cd into the directory

+```sh

+$ git clone https://github.com/IntelegixLabs/oneAPI_ODAV.git

+$ cd oneAPI_ODAV

+$ cd oneAPI_ODAV_App

+```

+- Download the trained models and the Test_Video folder from the Google Drive link given below, and extract them inside the oneAPI_ODAV_App folder.

+- https://drive.google.com/file/d/1EZAifBEQU9q8AgOkVzM_IqtC3tjQJrXA/view?usp=sharing

+

+```sh

+$ wget https://drive.google.com/file/d/1YXf8kMjowu28J5Z_ZPXoRIDABRKzmHis/view?usp=sharing

+```

+

+- Install Python 3.10 and the required packages, such as PyTorch.

+

+```sh

+$ pip install -r requirements.txt

+$ pip install -r requirements_gpu.txt

+$ pip3 install torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu117

+```

+

+- Run the app

+

+```sh

+$ python home.py

+```

+

+

+#### Packaging the Application as an Executable File That Can Run on Windows, Linux, or macOS

+

+You can pass any valid `pyinstaller` flag in the following command to further customize the way your app is built.

+For reference, read the PyInstaller documentation here.

+

+```sh

+$ pyinstaller -i "favicon.ico" --onefile -w --hiddenimport=EasyTkinter --hiddenimport=Pillow --hiddenimport=opencv-python --hiddenimport=requests --hiddenimport=Configparser --hiddenimport=PyAutoGUI --hiddenimport=numpy --hiddenimport=pandas --hiddenimport=urllib3 --hiddenimport=tensorflow --hiddenimport=scikit-learn --hiddenimport=wget --hiddenimport=pygame --hiddenimport=dlib --hiddenimport=imutils --hiddenimport=deepface --hiddenimport=keras --hiddenimport=cvlib --name oneAPI_ODAV home.py

+```

+

+

+## 4. Working Samples

+

+- For a video demonstration, refer to the YouTube link here.

+

+#### GUI INTERFACE SAMPLES

+

+

+  +

+  +

+

+

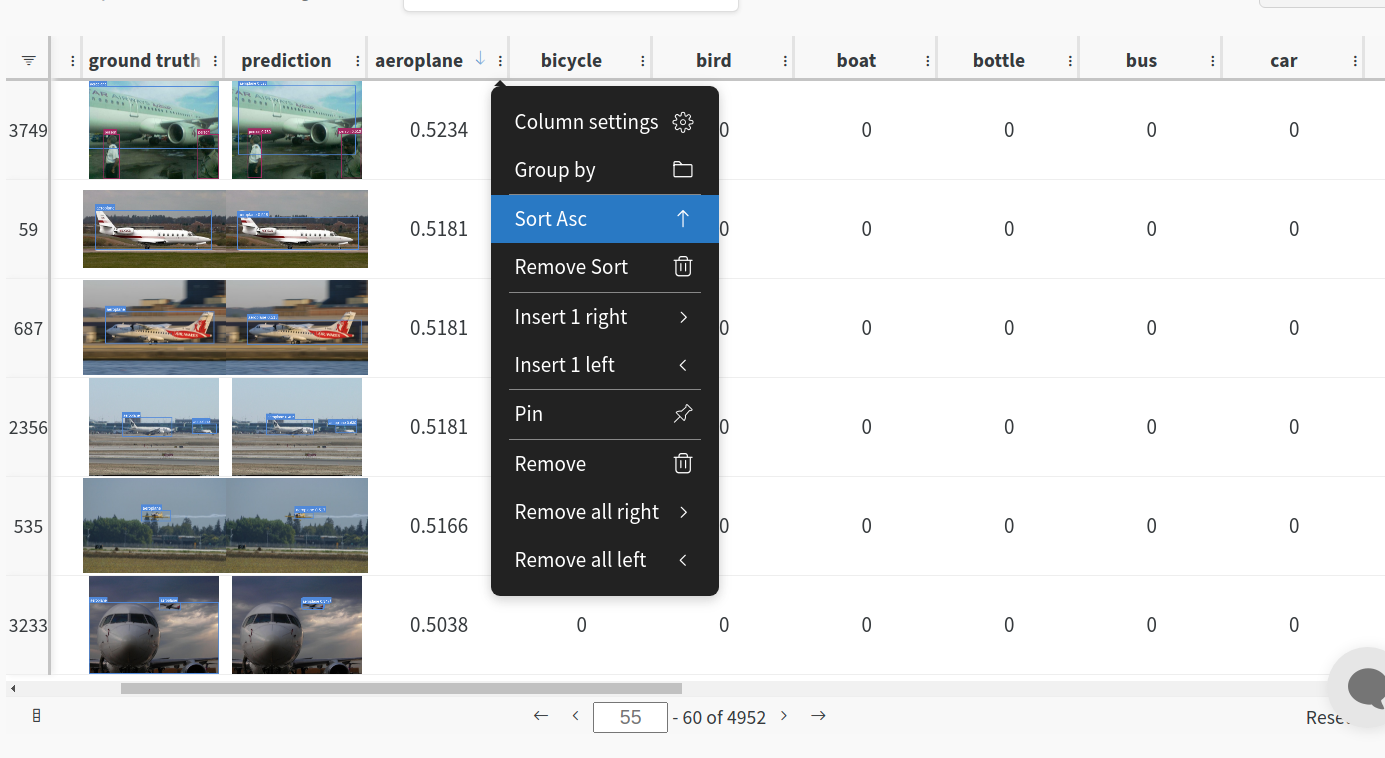

+#### THEME 1 (Detection and evaluation of the following elements on street imagery taken from a moving vehicle) :camera_flash:

+

+

+

+Object types:

+

+```

+ ● PERSON

+ ● BICYCLE

+ ● CAR

+ ● MOTORCYCLE

+ ● BUS

+ ● TRUCK

+ ● TRAFFIC LIGHT

+ ● STOP SIGN

+ ● PARKING METER

+ ● POTTED PLANT

+ ● CLOCK

+```

+

+

+  +

+

+

+

+#### Car Dashboard Custom Image Labelling Tool

+

+

+

+  +

+

+

+## 5. Running yolov7-Segmentation Model

+

+```sh

+$ cd oneAPI_ODAV

+$ cd seg/segment

+$ python predict.py

+```

+

+

+
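The data points listed under Features (userid, detected_image, label, bounding box coordinates, latitude, longitude) could be packaged for the REST API roughly as follows. This is only a sketch: the README does not show the actual request format, so the function name `build_detection_payload`, the JSON key `bounding_box`, the base64 encoding of the image, and the sample values are all assumptions made for illustration.

```python
import base64
import json


def build_detection_payload(user_id, image_bytes, label, bbox, latitude, longitude):
    """Serialize one detection as a JSON string (hypothetical format)."""
    return json.dumps({
        "userid": user_id,
        # Raw image bytes are not valid JSON, so encode them as base64 text.
        "detected_image": base64.b64encode(image_bytes).decode("ascii"),
        "label": label,
        # Assumed layout: [x_min, y_min, x_max, y_max] in pixels.
        "bounding_box": list(bbox),
        "latitude": latitude,
        "longitude": longitude,
    })


payload = build_detection_payload(
    "user-1", b"\x89PNG", "CAR", (10, 20, 110, 220), 12.97, 77.59
)
print(json.loads(payload)["label"])  # CAR
```

A string built this way could be queued locally while the dashboard is offline and posted once a connection is available, which matches the "once connected to the internet" behaviour described in the feature list.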

diff --git a/ipex/cfg/training/yolov7-custom.yaml b/ipex/cfg/training/yolov7-custom.yaml

new file mode 100644

index 000000000..60a2e9eef

--- /dev/null

+++ b/ipex/cfg/training/yolov7-custom.yaml

@@ -0,0 +1,140 @@

+# parameters

+nc: 1 # number of classes

+depth_multiple: 1.0 # model depth multiple

+width_multiple: 1.0 # layer channel multiple

+

+# anchors

+anchors:

+ - [12,16, 19,36, 40,28] # P3/8

+ - [36,75, 76,55, 72,146] # P4/16

+ - [142,110, 192,243, 459,401] # P5/32

+

+# yolov7 backbone

+backbone:

+ # [from, number, module, args]

+ [[-1, 1, Conv, [32, 3, 1]], # 0

+

+ [-1, 1, Conv, [64, 3, 2]], # 1-P1/2

+ [-1, 1, Conv, [64, 3, 1]],

+

+ [-1, 1, Conv, [128, 3, 2]], # 3-P2/4

+ [-1, 1, Conv, [64, 1, 1]],

+ [-2, 1, Conv, [64, 1, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [[-1, -3, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [256, 1, 1]], # 11

+

+ [-1, 1, MP, []],

+ [-1, 1, Conv, [128, 1, 1]],

+ [-3, 1, Conv, [128, 1, 1]],

+ [-1, 1, Conv, [128, 3, 2]],

+ [[-1, -3], 1, Concat, [1]], # 16-P3/8

+ [-1, 1, Conv, [128, 1, 1]],

+ [-2, 1, Conv, [128, 1, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [[-1, -3, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [512, 1, 1]], # 24

+

+ [-1, 1, MP, []],

+ [-1, 1, Conv, [256, 1, 1]],

+ [-3, 1, Conv, [256, 1, 1]],

+ [-1, 1, Conv, [256, 3, 2]],

+ [[-1, -3], 1, Concat, [1]], # 29-P4/16

+ [-1, 1, Conv, [256, 1, 1]],

+ [-2, 1, Conv, [256, 1, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [[-1, -3, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [1024, 1, 1]], # 37

+

+ [-1, 1, MP, []],

+ [-1, 1, Conv, [512, 1, 1]],

+ [-3, 1, Conv, [512, 1, 1]],

+ [-1, 1, Conv, [512, 3, 2]],

+ [[-1, -3], 1, Concat, [1]], # 42-P5/32

+ [-1, 1, Conv, [256, 1, 1]],

+ [-2, 1, Conv, [256, 1, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [[-1, -3, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [1024, 1, 1]], # 50

+ ]

+

+# yolov7 head

+head:

+ [[-1, 1, SPPCSPC, [512]], # 51

+

+ [-1, 1, Conv, [256, 1, 1]],

+ [-1, 1, nn.Upsample, [None, 2, 'nearest']],

+ [37, 1, Conv, [256, 1, 1]], # route backbone P4

+ [[-1, -2], 1, Concat, [1]],

+

+ [-1, 1, Conv, [256, 1, 1]],

+ [-2, 1, Conv, [256, 1, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [[-1, -2, -3, -4, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [256, 1, 1]], # 63

+

+ [-1, 1, Conv, [128, 1, 1]],

+ [-1, 1, nn.Upsample, [None, 2, 'nearest']],

+ [24, 1, Conv, [128, 1, 1]], # route backbone P3

+ [[-1, -2], 1, Concat, [1]],

+

+ [-1, 1, Conv, [128, 1, 1]],

+ [-2, 1, Conv, [128, 1, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [-1, 1, Conv, [64, 3, 1]],

+ [[-1, -2, -3, -4, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [128, 1, 1]], # 75

+

+ [-1, 1, MP, []],

+ [-1, 1, Conv, [128, 1, 1]],

+ [-3, 1, Conv, [128, 1, 1]],

+ [-1, 1, Conv, [128, 3, 2]],

+ [[-1, -3, 63], 1, Concat, [1]],

+

+ [-1, 1, Conv, [256, 1, 1]],

+ [-2, 1, Conv, [256, 1, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [-1, 1, Conv, [128, 3, 1]],

+ [[-1, -2, -3, -4, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [256, 1, 1]], # 88

+

+ [-1, 1, MP, []],

+ [-1, 1, Conv, [256, 1, 1]],

+ [-3, 1, Conv, [256, 1, 1]],

+ [-1, 1, Conv, [256, 3, 2]],

+ [[-1, -3, 51], 1, Concat, [1]],

+

+ [-1, 1, Conv, [512, 1, 1]],

+ [-2, 1, Conv, [512, 1, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [-1, 1, Conv, [256, 3, 1]],

+ [[-1, -2, -3, -4, -5, -6], 1, Concat, [1]],

+ [-1, 1, Conv, [512, 1, 1]], # 101

+

+ [75, 1, RepConv, [256, 3, 1]],

+ [88, 1, RepConv, [512, 3, 1]],

+ [101, 1, RepConv, [1024, 3, 1]],

+

+ [[102,103,104], 1, IDetect, [nc, anchors]], # Detect(P3, P4, P5)

+ ]
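A quick sanity check on the head defined in this config, as a minimal sketch. It assumes the usual YOLO layout: each anchor row holds three width/height pairs (so three anchors per detection level), and each anchor predicts `nc + 5` values (4 box coordinates, 1 objectness score, `nc` class scores). The helper names `head_channels` and `total_predictions` are illustrative and not part of the repository.

```python
# Anchors copied from the config: one row per detection level.
ANCHORS = [
    [12, 16, 19, 36, 40, 28],        # P3/8
    [36, 75, 76, 55, 72, 146],       # P4/16
    [142, 110, 192, 243, 459, 401],  # P5/32
]
STRIDES = [8, 16, 32]


def head_channels(nc, anchors_row):
    """Output channels of one detection level: anchors * (nc + 5)."""
    num_anchors = len(anchors_row) // 2  # each anchor is a (width, height) pair
    return num_anchors * (nc + 5)


def total_predictions(img_size, anchors=ANCHORS, strides=STRIDES):
    """Number of candidate boxes produced for a square input image."""
    total = 0
    for row, stride in zip(anchors, strides):
        grid = img_size // stride
        total += (len(row) // 2) * grid * grid
    return total


channels = [head_channels(1, row) for row in ANCHORS]
print(channels)                # [18, 18, 18]
print(total_predictions(640))  # 25200
```

With `nc: 1` each level therefore emits 18 channels, and a 640x640 input yields 25200 candidate boxes before non-maximum suppression.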

diff --git a/ipex/data/custom_data.yaml b/ipex/data/custom_data.yaml

new file mode 100644

index 000000000..4a4d17740

--- /dev/null

+++ b/ipex/data/custom_data.yaml

@@ -0,0 +1,17 @@

+

+

+

+# train and val data as 1) directory: path/images/, 2) file: path/images.txt, or 3) list: [path1/images/, path2/images/]

+train: ./data/train

+val: ./data/val

+test: ./data/test #

+

+# number of classes

+nc: 1

+

+# class names

+# names: [ 'GRAFFITI', 'FADED SIGNAGE', 'POTHOLES', 'GARBAGE', 'CONSTRUCTION ROAD', 'BROKEN SIGNAGE', 'BAD STREETLIGHT',

+# 'BAD BILLBOARD', 'SAND ON ROAD', 'CLUTTER SIDEWALK', 'UNKEPT FACADE' ]

+

+

+names: [ 'POTHOLES' ]
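Because `nc` appears both here and in the model config, a mismatch between `nc` and the length of `names` is an easy mistake to make when switching between the single-class list and the commented-out eleven-class list. The check below is a minimal sketch that operates on an already-parsed dictionary; `validate_dataset_config` is a hypothetical helper, not something the repository provides.

```python
def validate_dataset_config(cfg):
    """Return a list of problems found in a parsed dataset config (empty if none)."""
    problems = []
    for key in ("train", "val", "nc", "names"):
        if key not in cfg:
            problems.append(f"missing key: {key}")
    if "nc" in cfg and "names" in cfg and cfg["nc"] != len(cfg["names"]):
        problems.append(
            f"nc={cfg['nc']} but {len(cfg['names'])} class names given"
        )
    return problems


# Values mirror the YAML file above.
config = {
    "train": "./data/train",
    "val": "./data/val",
    "test": "./data/test",
    "nc": 1,
    "names": ["POTHOLES"],
}
print(validate_dataset_config(config))  # []
```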

diff --git a/ipex/data/hyp.scratch.custom.yaml b/ipex/data/hyp.scratch.custom.yaml

new file mode 100644

index 000000000..8570d7301

--- /dev/null

+++ b/ipex/data/hyp.scratch.custom.yaml

@@ -0,0 +1,31 @@

+lr0: 0.01 # initial learning rate (SGD=1E-2, Adam=1E-3)

+lrf: 0.1 # final OneCycleLR learning rate (lr0 * lrf)

+momentum: 0.937 # SGD momentum/Adam beta1

+weight_decay: 0.0005 # optimizer weight decay 5e-4

+warmup_epochs: 3.0 # warmup epochs (fractions ok)

+warmup_momentum: 0.8 # warmup initial momentum

+warmup_bias_lr: 0.1 # warmup initial bias lr

+box: 0.05 # box loss gain

+cls: 0.3 # cls loss gain

+cls_pw: 1.0 # cls BCELoss positive_weight

+obj: 0.7 # obj loss gain (scale with pixels)

+obj_pw: 1.0 # obj BCELoss positive_weight

+iou_t: 0.20 # IoU training threshold

+anchor_t: 4.0 # anchor-multiple threshold

+# anchors: 3 # anchors per output layer (0 to ignore)

+fl_gamma: 0.0 # focal loss gamma (efficientDet default gamma=1.5)

+hsv_h: 0.015 # image HSV-Hue augmentation (fraction)

+hsv_s: 0.7 # image HSV-Saturation augmentation (fraction)

+hsv_v: 0.4 # image HSV-Value augmentation (fraction)

+degrees: 0.0 # image rotation (+/- deg)

+translate: 0.2 # image translation (+/- fraction)

+scale: 0.5 # image scale (+/- gain)

+shear: 0.0 # image shear (+/- deg)

+perspective: 0.0 # image perspective (+/- fraction), range 0-0.001

+flipud: 0.0 # image flip up-down (probability)

+fliplr: 0.5 # image flip left-right (probability)

+mosaic: 1.0 # image mosaic (probability)

+mixup: 0.0 # image mixup (probability)

+copy_paste: 0.0 # image copy paste (probability)

+paste_in: 0.0 # image copy paste (probability), use 0 for faster training

+loss_ota: 1 # use ComputeLossOTA, use 0 for faster training

\ No newline at end of file
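To make the `lr0` and `lrf` comments concrete: the final learning rate is `lr0 * lrf`, which is 0.001 for the values in this file. The sketch below assumes a cosine one-cycle decay from `lr0` to `lr0 * lrf` and ignores the warmup epochs; whether the training script uses this exact curve or a linear one depends on its options, so treat the shape as an assumption. The 100-epoch default mirrors the `--epochs 100` training command in the README, and the function names are illustrative.

```python
import math


def one_cycle_factor(epoch, total_epochs, lrf):
    """Cosine interpolation from 1.0 (epoch 0) down to lrf (last epoch)."""
    return ((1 - math.cos(epoch * math.pi / total_epochs)) / 2) * (lrf - 1.0) + 1.0


def lr_at(epoch, total_epochs=100, lr0=0.01, lrf=0.1):
    """Learning rate at a given epoch, ignoring warmup."""
    return lr0 * one_cycle_factor(epoch, total_epochs, lrf)


for epoch in (0, 50, 100):
    print(epoch, round(lr_at(epoch), 6))
```

The rate starts at `lr0`, passes through the midpoint between the two extremes halfway through training, and ends at `lr0 * lrf`.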

diff --git a/ipex/deploy/triton-inference-server/README.md b/ipex/deploy/triton-inference-server/README.md

new file mode 100644

index 000000000..13af4daa9

--- /dev/null

+++ b/ipex/deploy/triton-inference-server/README.md

@@ -0,0 +1,164 @@

+# YOLOv7 on Triton Inference Server

+

+Instructions to deploy YOLOv7 as a TensorRT engine to [Triton Inference Server](https://github.com/NVIDIA/triton-inference-server).

+

+Triton Inference Server takes care of model deployment with many out-of-the-box benefits, like a GRPC and HTTP interface, automatic scheduling on multiple GPUs, shared memory (even on GPU), dynamic server-side batching, health metrics and memory resource management.

+

+There are no additional dependencies needed to run this deployment, except a working docker daemon with GPU support.

+

+## Export TensorRT

+

+See https://github.com/WongKinYiu/yolov7#export for more info.

+

+```bash

+# Install onnx-simplifier (not listed in the general yolov7 requirements.txt)

+pip3 install onnx-simplifier

+

+# Pytorch Yolov7 -> ONNX with grid, EfficientNMS plugin and dynamic batch size

+python export.py --weights ./yolov7.pt --grid --end2end --dynamic-batch --simplify --topk-all 100 --iou-thres 0.65 --conf-thres 0.35 --img-size 640 640

+# ONNX -> TensorRT with trtexec and docker

+docker run -it --rm --gpus=all nvcr.io/nvidia/tensorrt:22.06-py3

+# Copy onnx -> container: docker cp yolov7.onnx :/workspace/

+# Export with FP16 precision, min batch 1, opt batch 8 and max batch 8

+./tensorrt/bin/trtexec --onnx=yolov7.onnx --minShapes=images:1x3x640x640 --optShapes=images:8x3x640x640 --maxShapes=images:8x3x640x640 --fp16 --workspace=4096 --saveEngine=yolov7-fp16-1x8x8.engine --timingCacheFile=timing.cache

+# Test engine

+./tensorrt/bin/trtexec --loadEngine=yolov7-fp16-1x8x8.engine

+# Copy engine -> host: docker cp :/workspace/yolov7-fp16-1x8x8.engine .

+```

+

+Example output of test with RTX 3090.

+

+```

+[I] === Performance summary ===

+[I] Throughput: 73.4985 qps

+[I] Latency: min = 14.8578 ms, max = 15.8344 ms, mean = 15.07 ms, median = 15.0422 ms, percentile(99%) = 15.7443 ms

+[I] End-to-End Host Latency: min = 25.8715 ms, max = 28.4102 ms, mean = 26.672 ms, median = 26.6082 ms, percentile(99%) = 27.8314 ms

+[I] Enqueue Time: min = 0.793701 ms, max = 1.47144 ms, mean = 1.2008 ms, median = 1.28644 ms, percentile(99%) = 1.38965 ms

+[I] H2D Latency: min = 1.50073 ms, max = 1.52454 ms, mean = 1.51225 ms, median = 1.51404 ms, percentile(99%) = 1.51941 ms

+[I] GPU Compute Time: min = 13.3386 ms, max = 14.3186 ms, mean = 13.5448 ms, median = 13.5178 ms, percentile(99%) = 14.2151 ms

+[I] D2H Latency: min = 0.00878906 ms, max = 0.0172729 ms, mean = 0.0128844 ms, median = 0.0125732 ms, percentile(99%) = 0.0166016 ms

+[I] Total Host Walltime: 3.04768 s

+[I] Total GPU Compute Time: 3.03404 s

+[I] Explanations of the performance metrics are printed in the verbose logs.

+```

+Note: 73.5 qps x batch 8 = 588 fps @ ~15ms latency.

+

+## Model Repository

+

+See [Triton Model Repository Documentation](https://github.com/triton-inference-server/server/blob/main/docs/model_repository.md#model-repository) for more info.

+

+```bash

+# Create folder structure

+mkdir -p triton-deploy/models/yolov7/1/

+touch triton-deploy/models/yolov7/config.pbtxt

+# Place model

+mv yolov7-fp16-1x8x8.engine triton-deploy/models/yolov7/1/model.plan

+```

+

+## Model Configuration

+

+See [Triton Model Configuration Documentation](https://github.com/triton-inference-server/server/blob/main/docs/model_configuration.md#model-configuration) for more info.

+

+Minimal configuration for `triton-deploy/models/yolov7/config.pbtxt`:

+

+```

+name: "yolov7"

+platform: "tensorrt_plan"

+max_batch_size: 8

+dynamic_batching { }

+```

+

+Example repository:

+

+```bash

+$ tree triton-deploy/

+triton-deploy/

+└── models

+    └── yolov7

+        ├── 1

+        │   └── model.plan

+        └── config.pbtxt

+

+3 directories, 2 files

+```

+

+## Start Triton Inference Server

+

+```

+docker run --gpus all --rm --ipc=host --shm-size=1g --ulimit memlock=-1 --ulimit stack=67108864 -p8000:8000 -p8001:8001 -p8002:8002 -v$(pwd)/triton-deploy/models:/models nvcr.io/nvidia/tritonserver:22.06-py3 tritonserver --model-repository=/models --strict-model-config=false --log-verbose 1

+```

+

+In the log you should see:

+

+```

++--------+---------+--------+

+| Model | Version | Status |

++--------+---------+--------+

+| yolov7 | 1 | READY |

++--------+---------+--------+

+```

+

+## Performance with Model Analyzer

+

+See [Triton Model Analyzer Documentation](https://github.com/triton-inference-server/server/blob/main/docs/model_analyzer.md#model-analyzer) for more info.

+

+Performance numbers @ RTX 3090 + AMD Ryzen 9 5950X

+

+Example test for 16 concurrent clients using shared memory, each with batch size 1 requests:

+

+```bash

+docker run -it --ipc=host --net=host nvcr.io/nvidia/tritonserver:22.06-py3-sdk /bin/bash

+

+./install/bin/perf_analyzer -m yolov7 -u 127.0.0.1:8001 -i grpc --shared-memory system --concurrency-range 16

+

+# Result (truncated)

+Concurrency: 16, throughput: 590.119 infer/sec, latency 27080 usec

+```

+

+Throughput for 16 clients with batch size 1 is the same as for a single thread running the engine at batch size 16 locally, thanks to Triton's [Dynamic Batching Strategy](https://github.com/triton-inference-server/server/blob/main/docs/model_configuration.md#dynamic-batcher). The result without dynamic batching (disabled in the model configuration) is considerably worse:

+

+```bash

+# Result (truncated)

+Concurrency: 16, throughput: 335.587 infer/sec, latency 47616 usec

+```
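As a sanity check on the two results, the reported latencies are consistent with Little's law, which for a closed loop of N concurrent clients gives a mean latency of roughly N divided by throughput. The sketch below only re-derives numbers from the figures quoted above; it assumes the reported latency is the average end-to-end client latency, and the function name is illustrative.

```python
def expected_latency_usec(concurrency, throughput_infer_per_sec):
    """Little's law: mean latency ~= requests in flight / throughput."""
    return concurrency / throughput_infer_per_sec * 1e6


# Figures taken from the perf_analyzer output above.
with_batching = expected_latency_usec(16, 590.119)
without_batching = expected_latency_usec(16, 335.587)
speedup = 590.119 / 335.587

print(f"with dynamic batching:    ~{with_batching:.0f} usec")
print(f"without dynamic batching: ~{without_batching:.0f} usec")
print(f"throughput ratio:         ~{speedup:.2f}x")
```

Both estimates land within about 1% of the measured 27080 usec and 47616 usec, and the throughput ratio comes out at roughly 1.76x in favor of dynamic batching.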

+

+## How to run model in your code

+

+An example client can be found in `client.py`. It can run on dummy input, images, and videos.

+

+```bash

+pip3 install tritonclient[all] opencv-python

+python3 client.py image data/dog.jpg

+```

+

+

+

+```

+$ python3 client.py --help

+usage: client.py [-h] [-m MODEL] [--width WIDTH] [--height HEIGHT] [-u URL] [-o OUT] [-f FPS] [-i] [-v] [-t CLIENT_TIMEOUT] [-s] [-r ROOT_CERTIFICATES] [-p PRIVATE_KEY] [-x CERTIFICATE_CHAIN] {dummy,image,video} [input]

+

+positional arguments:

+ {dummy,image,video} Run mode. 'dummy' will send an empty buffer to the server to test if inference works. 'image' will process an image. 'video' will process a video.

+ input Input file to load from in image or video mode

+

+optional arguments:

+ -h, --help show this help message and exit

+ -m MODEL, --model MODEL

+ Inference model name, default yolov7

+ --width WIDTH Inference model input width, default 640

+ --height HEIGHT Inference model input height, default 640

+ -u URL, --url URL Inference server URL, default localhost:8001

+ -o OUT, --out OUT Write output into file instead of displaying it

+ -f FPS, --fps FPS Video output fps, default 24.0 FPS

+ -i, --model-info Print model status, configuration and statistics

+ -v, --verbose Enable verbose client output

+ -t CLIENT_TIMEOUT, --client-timeout CLIENT_TIMEOUT

+ Client timeout in seconds, default no timeout

+ -s, --ssl Enable SSL encrypted channel to the server

+ -r ROOT_CERTIFICATES, --root-certificates ROOT_CERTIFICATES

+ File holding PEM-encoded root certificates, default none

+ -p PRIVATE_KEY, --private-key PRIVATE_KEY

+ File holding PEM-encoded private key, default is none

+ -x CERTIFICATE_CHAIN, --certificate-chain CERTIFICATE_CHAIN

+ File holding PEM-encoded certificate chain, default is none

+```
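In image and video mode, the client letterboxes each frame to the model input size before inference and maps the returned boxes back to the original image afterwards (see `preprocess` and `postprocess` in `processing.py`). The sketch below illustrates the geometry in plain Python. It is an equivalent formulation for illustration, not the code in `processing.py`, and the function names `letterbox_geometry` and `to_image_coords` are hypothetical.

```python
def letterbox_geometry(img_w, img_h, net_w, net_h):
    """Return (scale, pad_x, pad_y) for fitting an image into the network
    input while preserving its aspect ratio and centering it."""
    scale = min(net_w / img_w, net_h / img_h)
    new_w, new_h = int(img_w * scale), int(img_h * scale)
    pad_x = (net_w - new_w) // 2  # gray border on the left
    pad_y = (net_h - new_h) // 2  # gray border on the top
    return scale, pad_x, pad_y


def to_image_coords(box, scale, pad_x, pad_y):
    """Map an (x1, y1, x2, y2) box from network-input pixels back to
    original-image pixels by undoing the padding, then the scaling."""
    x1, y1, x2, y2 = box
    return (
        (x1 - pad_x) / scale,
        (y1 - pad_y) / scale,
        (x2 - pad_x) / scale,
        (y2 - pad_y) / scale,
    )


# A 1280x720 frame fed to a 640x640 model is scaled by 0.5 and padded
# by 140 pixels at the top and bottom.
scale, pad_x, pad_y = letterbox_geometry(1280, 720, 640, 640)
print(scale, pad_x, pad_y)
print(to_image_coords((100, 200, 300, 400), scale, pad_x, pad_y))
```

For this example, a detection at `(100, 200, 300, 400)` in network coordinates corresponds to `(200, 120, 600, 520)` in the original frame.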

diff --git a/ipex/deploy/triton-inference-server/boundingbox.py b/ipex/deploy/triton-inference-server/boundingbox.py

new file mode 100644

index 000000000..8b95330b8

--- /dev/null

+++ b/ipex/deploy/triton-inference-server/boundingbox.py

@@ -0,0 +1,33 @@

+class BoundingBox:

+ def __init__(self, classID, confidence, x1, x2, y1, y2, image_width, image_height):

+ self.classID = classID

+ self.confidence = confidence

+ self.x1 = x1

+ self.x2 = x2

+ self.y1 = y1

+ self.y2 = y2

+ self.u1 = x1 / image_width

+ self.u2 = x2 / image_width

+ self.v1 = y1 / image_height

+ self.v2 = y2 / image_height

+

+ def box(self):

+ return (self.x1, self.y1, self.x2, self.y2)

+

+ def width(self):

+ return self.x2 - self.x1

+

+ def height(self):

+ return self.y2 - self.y1

+

+ def center_absolute(self):

+ return (0.5 * (self.x1 + self.x2), 0.5 * (self.y1 + self.y2))

+

+ def center_normalized(self):

+ return (0.5 * (self.u1 + self.u2), 0.5 * (self.v1 + self.v2))

+

+ def size_absolute(self):

+ return (self.x2 - self.x1, self.y2 - self.y1)

+

+ def size_normalized(self):

+ return (self.u2 - self.u1, self.v2 - self.v1)

diff --git a/ipex/deploy/triton-inference-server/client.py b/ipex/deploy/triton-inference-server/client.py

new file mode 100644

index 000000000..aedca11c7

--- /dev/null

+++ b/ipex/deploy/triton-inference-server/client.py

@@ -0,0 +1,334 @@

+#!/usr/bin/env python

+

+import argparse

+import numpy as np

+import sys

+import cv2

+

+import tritonclient.grpc as grpcclient

+from tritonclient.utils import InferenceServerException

+

+from processing import preprocess, postprocess

+from render import render_box, render_filled_box, get_text_size, render_text, RAND_COLORS

+from labels import COCOLabels

+

+INPUT_NAMES = ["images"]

+OUTPUT_NAMES = ["num_dets", "det_boxes", "det_scores", "det_classes"]

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('mode',

+ choices=['dummy', 'image', 'video'],

+ default='dummy',

+ help='Run mode. \'dummy\' will send an empty buffer to the server to test if inference works. \'image\' will process an image. \'video\' will process a video.')

+ parser.add_argument('input',

+ type=str,

+ nargs='?',

+ help='Input file to load from in image or video mode')

+ parser.add_argument('-m',

+ '--model',

+ type=str,

+ required=False,

+ default='yolov7',

+ help='Inference model name, default yolov7')

+ parser.add_argument('--width',

+ type=int,

+ required=False,

+ default=640,

+ help='Inference model input width, default 640')

+ parser.add_argument('--height',

+ type=int,

+ required=False,

+ default=640,

+ help='Inference model input height, default 640')

+ parser.add_argument('-u',

+ '--url',

+ type=str,

+ required=False,

+ default='localhost:8001',

+ help='Inference server URL, default localhost:8001')

+ parser.add_argument('-o',

+ '--out',

+ type=str,

+ required=False,

+ default='',

+ help='Write output into file instead of displaying it')

+ parser.add_argument('-f',

+ '--fps',

+ type=float,

+ required=False,

+ default=24.0,

+ help='Video output fps, default 24.0 FPS')

+ parser.add_argument('-i',

+ '--model-info',

+ action="store_true",

+ required=False,

+ default=False,

+ help='Print model status, configuration and statistics')

+ parser.add_argument('-v',

+ '--verbose',

+ action="store_true",

+ required=False,

+ default=False,

+ help='Enable verbose client output')

+ parser.add_argument('-t',

+ '--client-timeout',

+ type=float,

+ required=False,

+ default=None,

+ help='Client timeout in seconds, default no timeout')

+ parser.add_argument('-s',

+ '--ssl',

+ action="store_true",

+ required=False,

+ default=False,

+ help='Enable SSL encrypted channel to the server')

+ parser.add_argument('-r',

+ '--root-certificates',

+ type=str,

+ required=False,

+ default=None,

+ help='File holding PEM-encoded root certificates, default none')

+ parser.add_argument('-p',

+ '--private-key',

+ type=str,

+ required=False,

+ default=None,

+ help='File holding PEM-encoded private key, default is none')

+ parser.add_argument('-x',

+ '--certificate-chain',

+ type=str,

+ required=False,

+ default=None,

+ help='File holding PEM-encoded certificate chain, default is none')

+

+ FLAGS = parser.parse_args()

+

+ # Create server context

+ try:

+ triton_client = grpcclient.InferenceServerClient(

+ url=FLAGS.url,

+ verbose=FLAGS.verbose,

+ ssl=FLAGS.ssl,

+ root_certificates=FLAGS.root_certificates,

+ private_key=FLAGS.private_key,

+ certificate_chain=FLAGS.certificate_chain)

+ except Exception as e:

+ print("context creation failed: " + str(e))

+ sys.exit(1)

+

+ # Health check

+ if not triton_client.is_server_live():

+ print("FAILED : is_server_live")

+ sys.exit(1)

+

+ if not triton_client.is_server_ready():

+ print("FAILED : is_server_ready")

+ sys.exit(1)

+

+ if not triton_client.is_model_ready(FLAGS.model):

+ print("FAILED : is_model_ready")

+ sys.exit(1)

+

+ if FLAGS.model_info:

+ # Model metadata

+ try:

+ metadata = triton_client.get_model_metadata(FLAGS.model)

+ print(metadata)

+ except InferenceServerException as ex:

+ if "Request for unknown model" not in ex.message():

+ print("FAILED : get_model_metadata")

+ print("Got: {}".format(ex.message()))

+ sys.exit(1)

+ else:

+ print("FAILED : get_model_metadata")

+ sys.exit(1)

+

+ # Model configuration

+ try:

+ config = triton_client.get_model_config(FLAGS.model)

+ if not (config.config.name == FLAGS.model):

+ print("FAILED: get_model_config")

+ sys.exit(1)

+ print(config)

+ except InferenceServerException as ex:

+ print("FAILED : get_model_config")

+ print("Got: {}".format(ex.message()))

+ sys.exit(1)

+

+ # DUMMY MODE

+ if FLAGS.mode == 'dummy':

+ print("Running in 'dummy' mode")

+ print("Creating buffer filled with ones...")

+ inputs = []

+ outputs = []

+ inputs.append(grpcclient.InferInput(INPUT_NAMES[0], [1, 3, FLAGS.width, FLAGS.height], "FP32"))

+ inputs[0].set_data_from_numpy(np.ones(shape=(1, 3, FLAGS.width, FLAGS.height), dtype=np.float32))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[0]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[1]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[2]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[3]))

+

+ print("Invoking inference...")

+ results = triton_client.infer(model_name=FLAGS.model,

+ inputs=inputs,

+ outputs=outputs,

+ client_timeout=FLAGS.client_timeout)

+ if FLAGS.model_info:

+ statistics = triton_client.get_inference_statistics(model_name=FLAGS.model)

+ if len(statistics.model_stats) != 1:

+ print("FAILED: get_inference_statistics")

+ sys.exit(1)

+ print(statistics)

+ print("Done")

+

+ for output in OUTPUT_NAMES:

+ result = results.as_numpy(output)

+ print(f"Received result buffer \"{output}\" of size {result.shape}")

+ print(f"Naive buffer sum: {np.sum(result)}")

+

+ # IMAGE MODE

+ if FLAGS.mode == 'image':

+ print("Running in 'image' mode")

+ if not FLAGS.input:

+ print("FAILED: no input image")

+ sys.exit(1)

+

+ inputs = []

+ outputs = []

+ inputs.append(grpcclient.InferInput(INPUT_NAMES[0], [1, 3, FLAGS.width, FLAGS.height], "FP32"))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[0]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[1]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[2]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[3]))

+

+ print("Creating buffer from image file...")

+ input_image = cv2.imread(str(FLAGS.input))

+ if input_image is None:

+ print(f"FAILED: could not load input image {str(FLAGS.input)}")

+ sys.exit(1)

+ input_image_buffer = preprocess(input_image, [FLAGS.width, FLAGS.height])

+ input_image_buffer = np.expand_dims(input_image_buffer, axis=0)

+

+ inputs[0].set_data_from_numpy(input_image_buffer)

+

+ print("Invoking inference...")

+ results = triton_client.infer(model_name=FLAGS.model,

+ inputs=inputs,

+ outputs=outputs,

+ client_timeout=FLAGS.client_timeout)

+ if FLAGS.model_info:

+ statistics = triton_client.get_inference_statistics(model_name=FLAGS.model)

+ if len(statistics.model_stats) != 1:

+ print("FAILED: get_inference_statistics")

+ sys.exit(1)

+ print(statistics)

+ print("Done")

+

+ for output in OUTPUT_NAMES:

+ result = results.as_numpy(output)

+ print(f"Received result buffer \"{output}\" of size {result.shape}")

+ print(f"Naive buffer sum: {np.sum(result)}")

+

+ num_dets = results.as_numpy(OUTPUT_NAMES[0])

+ det_boxes = results.as_numpy(OUTPUT_NAMES[1])

+ det_scores = results.as_numpy(OUTPUT_NAMES[2])

+ det_classes = results.as_numpy(OUTPUT_NAMES[3])

+ detected_objects = postprocess(num_dets, det_boxes, det_scores, det_classes, input_image.shape[1], input_image.shape[0], [FLAGS.width, FLAGS.height])

+ print(f"Detected objects: {len(detected_objects)}")

+

+ for box in detected_objects:

+ print(f"{COCOLabels(box.classID).name}: {box.confidence}")

+ input_image = render_box(input_image, box.box(), color=tuple(RAND_COLORS[box.classID % 64].tolist()))

+ size = get_text_size(input_image, f"{COCOLabels(box.classID).name}: {box.confidence:.2f}", normalised_scaling=0.6)

+ input_image = render_filled_box(input_image, (box.x1 - 3, box.y1 - 3, box.x1 + size[0], box.y1 + size[1]), color=(220, 220, 220))

+ input_image = render_text(input_image, f"{COCOLabels(box.classID).name}: {box.confidence:.2f}", (box.x1, box.y1), color=(30, 30, 30), normalised_scaling=0.5)

+

+ if FLAGS.out:

+ cv2.imwrite(FLAGS.out, input_image)

+ print(f"Saved result to {FLAGS.out}")

+ else:

+ cv2.imshow('image', input_image)

+ cv2.waitKey(0)

+ cv2.destroyAllWindows()

+

+ # VIDEO MODE

+ if FLAGS.mode == 'video':

+ print("Running in 'video' mode")

+ if not FLAGS.input:

+ print("FAILED: no input video")

+ sys.exit(1)

+

+ inputs = []

+ outputs = []

+ inputs.append(grpcclient.InferInput(INPUT_NAMES[0], [1, 3, FLAGS.width, FLAGS.height], "FP32"))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[0]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[1]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[2]))

+ outputs.append(grpcclient.InferRequestedOutput(OUTPUT_NAMES[3]))

+

+ print("Opening input video stream...")

+ cap = cv2.VideoCapture(FLAGS.input)

+ if not cap.isOpened():

+ print(f"FAILED: cannot open video {FLAGS.input}")

+ sys.exit(1)

+

+ counter = 0

+ out = None

+ print("Invoking inference...")

+ while True:

+ ret, frame = cap.read()

+ if not ret:

+ print("failed to fetch next frame")

+ break

+

+ if counter == 0 and FLAGS.out:

+ print("Opening output video stream...")

+ fourcc = cv2.VideoWriter_fourcc('M', 'P', '4', 'V')

+ out = cv2.VideoWriter(FLAGS.out, fourcc, FLAGS.fps, (frame.shape[1], frame.shape[0]))

+

+ input_image_buffer = preprocess(frame, [FLAGS.width, FLAGS.height])

+ input_image_buffer = np.expand_dims(input_image_buffer, axis=0)

+

+ inputs[0].set_data_from_numpy(input_image_buffer)

+

+ results = triton_client.infer(model_name=FLAGS.model,

+ inputs=inputs,

+ outputs=outputs,

+ client_timeout=FLAGS.client_timeout)

+

+ num_dets = results.as_numpy("num_dets")

+ det_boxes = results.as_numpy("det_boxes")

+ det_scores = results.as_numpy("det_scores")

+ det_classes = results.as_numpy("det_classes")

+ detected_objects = postprocess(num_dets, det_boxes, det_scores, det_classes, frame.shape[1], frame.shape[0], [FLAGS.width, FLAGS.height])

+ print(f"Frame {counter}: {len(detected_objects)} objects")

+ counter += 1

+

+ for box in detected_objects:

+ print(f"{COCOLabels(box.classID).name}: {box.confidence}")

+ frame = render_box(frame, box.box(), color=tuple(RAND_COLORS[box.classID % 64].tolist()))

+ size = get_text_size(frame, f"{COCOLabels(box.classID).name}: {box.confidence:.2f}", normalised_scaling=0.6)

+ frame = render_filled_box(frame, (box.x1 - 3, box.y1 - 3, box.x1 + size[0], box.y1 + size[1]), color=(220, 220, 220))

+ frame = render_text(frame, f"{COCOLabels(box.classID).name}: {box.confidence:.2f}", (box.x1, box.y1), color=(30, 30, 30), normalised_scaling=0.5)

+

+ if FLAGS.out:

+ out.write(frame)

+ else:

+ cv2.imshow('image', frame)

+ if cv2.waitKey(1) == ord('q'):

+ break

+

+ if FLAGS.model_info:

+ statistics = triton_client.get_inference_statistics(model_name=FLAGS.model)

+ if len(statistics.model_stats) != 1:

+ print("FAILED: get_inference_statistics")

+ sys.exit(1)

+ print(statistics)

+ print("Done")

+

+ cap.release()

+ if FLAGS.out:

+ out.release()

+ else:

+ cv2.destroyAllWindows()

diff --git a/ipex/deploy/triton-inference-server/labels.py b/ipex/deploy/triton-inference-server/labels.py

new file mode 100644

index 000000000..ba6c5c516

--- /dev/null

+++ b/ipex/deploy/triton-inference-server/labels.py

@@ -0,0 +1,83 @@

+from enum import Enum

+

+class COCOLabels(Enum):

+ PERSON = 0

+ BICYCLE = 1

+ CAR = 2

+ MOTORBIKE = 3

+ AEROPLANE = 4

+ BUS = 5

+ TRAIN = 6

+ TRUCK = 7

+ BOAT = 8

+ TRAFFIC_LIGHT = 9

+ FIRE_HYDRANT = 10

+ STOP_SIGN = 11

+ PARKING_METER = 12

+ BENCH = 13

+ BIRD = 14

+ CAT = 15

+ DOG = 16

+ HORSE = 17

+ SHEEP = 18

+ COW = 19

+ ELEPHANT = 20

+ BEAR = 21

+ ZEBRA = 22

+ GIRAFFE = 23

+ BACKPACK = 24

+ UMBRELLA = 25

+ HANDBAG = 26

+ TIE = 27

+ SUITCASE = 28

+ FRISBEE = 29

+ SKIS = 30

+ SNOWBOARD = 31

+ SPORTS_BALL = 32

+ KITE = 33

+ BASEBALL_BAT = 34

+ BASEBALL_GLOVE = 35

+ SKATEBOARD = 36

+ SURFBOARD = 37

+ TENNIS_RACKET = 38

+ BOTTLE = 39

+ WINE_GLASS = 40

+ CUP = 41

+ FORK = 42

+ KNIFE = 43

+ SPOON = 44

+ BOWL = 45

+ BANANA = 46

+ APPLE = 47

+ SANDWICH = 48

+ ORANGE = 49

+ BROCCOLI = 50

+ CARROT = 51

+ HOT_DOG = 52

+ PIZZA = 53

+ DONUT = 54

+ CAKE = 55

+ CHAIR = 56

+ SOFA = 57

+ POTTEDPLANT = 58

+ BED = 59

+ DININGTABLE = 60

+ TOILET = 61

+ TVMONITOR = 62

+ LAPTOP = 63

+ MOUSE = 64

+ REMOTE = 65

+ KEYBOARD = 66

+ CELL_PHONE = 67

+ MICROWAVE = 68

+ OVEN = 69

+ TOASTER = 70

+ SINK = 71

+ REFRIGERATOR = 72

+ BOOK = 73

+ CLOCK = 74

+ VASE = 75

+ SCISSORS = 76

+ TEDDY_BEAR = 77

+ HAIR_DRIER = 78

+ TOOTHBRUSH = 79

diff --git a/ipex/deploy/triton-inference-server/processing.py b/ipex/deploy/triton-inference-server/processing.py

new file mode 100644

index 000000000..3d51c50a3

--- /dev/null

+++ b/ipex/deploy/triton-inference-server/processing.py

@@ -0,0 +1,51 @@

+from boundingbox import BoundingBox

+

+import cv2

+import numpy as np

+

+def preprocess(img, input_shape, letter_box=True):

+ if letter_box:

+ img_h, img_w, _ = img.shape

+ new_h, new_w = input_shape[0], input_shape[1]

+ offset_h, offset_w = 0, 0

+ if (new_w / img_w) <= (new_h / img_h):

+ new_h = int(img_h * new_w / img_w)

+ offset_h = (input_shape[0] - new_h) // 2

+ else:

+ new_w = int(img_w * new_h / img_h)

+ offset_w = (input_shape[1] - new_w) // 2

+ resized = cv2.resize(img, (new_w, new_h))

+ img = np.full((input_shape[0], input_shape[1], 3), 127, dtype=np.uint8)

+ img[offset_h:(offset_h + new_h), offset_w:(offset_w + new_w), :] = resized

+ else:

+ img = cv2.resize(img, (input_shape[1], input_shape[0]))

+

+ img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

+ img = img.transpose((2, 0, 1)).astype(np.float32)

+ img /= 255.0

+ return img

+

+def postprocess(num_dets, det_boxes, det_scores, det_classes, img_w, img_h, input_shape, letter_box=True):

+ boxes = det_boxes[0, :num_dets[0][0]] / np.array([input_shape[0], input_shape[1], input_shape[0], input_shape[1]], dtype=np.float32)

+ scores = det_scores[0, :num_dets[0][0]]

+ classes = det_classes[0, :num_dets[0][0]].astype(int)

+

+ old_h, old_w = img_h, img_w

+ offset_h, offset_w = 0, 0

+ if letter_box:

+ if (img_w / input_shape[1]) >= (img_h / input_shape[0]):

+ old_h = int(input_shape[0] * img_w / input_shape[1])

+ offset_h = (old_h - img_h) // 2

+ else:

+ old_w = int(input_shape[1] * img_h / input_shape[0])

+ offset_w = (old_w - img_w) // 2

+

+ boxes = boxes * np.array([old_w, old_h, old_w, old_h], dtype=np.float32)

+ if letter_box:

+ boxes -= np.array([offset_w, offset_h, offset_w, offset_h], dtype=np.float32)

+ boxes = boxes.astype(int)

+

+ detected_objects = []

+ for box, score, label in zip(boxes, scores, classes):

+ detected_objects.append(BoundingBox(label, score, box[0], box[2], box[1], box[3], img_w, img_h))

+ return detected_objects

diff --git a/ipex/deploy/triton-inference-server/render.py b/ipex/deploy/triton-inference-server/render.py

new file mode 100644

index 000000000..dea040156

--- /dev/null

+++ b/ipex/deploy/triton-inference-server/render.py

@@ -0,0 +1,110 @@

+import numpy as np

+

+import cv2

+

+from math import sqrt

+

+_LINE_THICKNESS_SCALING = 500.0

+

+np.random.seed(0)

+RAND_COLORS = np.random.randint(50, 255, (64, 3), "int") # used for class visualization

+RAND_COLORS[0] = [220, 220, 220]

+

+def render_box(img, box, color=(200, 200, 200)):

+ """

+ Render a box. Calculates scaling and thickness automatically.

+ :param img: image to render into

+ :param box: (x1, y1, x2, y2) - box coordinates

+ :param color: (b, g, r) - box color

+ :return: updated image

+ """

+ x1, y1, x2, y2 = box

+ thickness = int(

+ round(

+ (img.shape[0] * img.shape[1])

+ / (_LINE_THICKNESS_SCALING * _LINE_THICKNESS_SCALING)

+ )

+ )

+ thickness = max(1, thickness)

+ img = cv2.rectangle(

+ img,

+ (int(x1), int(y1)),

+ (int(x2), int(y2)),

+ color,

+ thickness=thickness

+ )

+ return img

+

+def render_filled_box(img, box, color=(200, 200, 200)):

+ """

+ Render a filled box.

+ :param img: image to render into

+ :param box: (x1, y1, x2, y2) - box coordinates

+ :param color: (b, g, r) - box color

+ :return: updated image

+ """

+ x1, y1, x2, y2 = box

+ img = cv2.rectangle(

+ img,

+ (int(x1), int(y1)),

+ (int(x2), int(y2)),

+ color,

+ thickness=cv2.FILLED

+ )

+ return img

+

+_TEXT_THICKNESS_SCALING = 700.0

+_TEXT_SCALING = 520.0

+

+

+def get_text_size(img, text, normalised_scaling=1.0):

+ """

+ Get calculated text size (as box width and height)

+ :param img: image reference, used to determine appropriate text scaling

+ :param text: text to display

+ :param normalised_scaling: additional normalised scaling. Default 1.0.

+ :return: (width, height) - width and height of text box

+ """

+ thickness = int(

+ round(

+ (img.shape[0] * img.shape[1])

+ / (_TEXT_THICKNESS_SCALING * _TEXT_THICKNESS_SCALING)

+ )

+ * normalised_scaling

+ )

+ thickness = max(1, thickness)

+ scaling = img.shape[0] / _TEXT_SCALING * normalised_scaling

+ return cv2.getTextSize(text, cv2.FONT_HERSHEY_SIMPLEX, scaling, thickness)[0]

+

+

+def render_text(img, text, pos, color=(200, 200, 200), normalised_scaling=1.0):

+ """

+ Render a text into the image. Calculates scaling and thickness automatically.

+ :param img: image to render into

+ :param text: text to display

+ :param pos: (x, y) - upper left coordinates of render position

+ :param color: (b, g, r) - text color

+ :param normalised_scaling: additional normalised scaling. Default 1.0.

+ :return: updated image

+ """

+ x, y = pos

+ thickness = int(

+ round(

+ (img.shape[0] * img.shape[1])

+ / (_TEXT_THICKNESS_SCALING * _TEXT_THICKNESS_SCALING)

+ )

+ * normalised_scaling

+ )

+ thickness = max(1, thickness)

+ scaling = img.shape[0] / _TEXT_SCALING * normalised_scaling

+ size = get_text_size(img, text, normalised_scaling)

+ cv2.putText(

+ img,

+ text,

+ (int(x), int(y + size[1])),

+ cv2.FONT_HERSHEY_SIMPLEX,

+ scaling,

+ color,

+ thickness=thickness,

+ )

+ return img

diff --git a/ipex/detect.py b/ipex/detect.py

new file mode 100644

index 000000000..5e0c4416a

--- /dev/null

+++ b/ipex/detect.py

@@ -0,0 +1,196 @@

+import argparse

+import time

+from pathlib import Path

+

+import cv2

+import torch

+import torch.backends.cudnn as cudnn

+from numpy import random

+

+from models.experimental import attempt_load

+from utils.datasets import LoadStreams, LoadImages

+from utils.general import check_img_size, check_requirements, check_imshow, non_max_suppression, apply_classifier, \

+ scale_coords, xyxy2xywh, strip_optimizer, set_logging, increment_path

+from utils.plots import plot_one_box

+from utils.torch_utils import select_device, load_classifier, time_synchronized, TracedModel

+

+

+def detect(save_img=False):

+ source, weights, view_img, save_txt, imgsz, trace = opt.source, opt.weights, opt.view_img, opt.save_txt, opt.img_size, not opt.no_trace

+ save_img = not opt.nosave and not source.endswith('.txt') # save inference images

+ webcam = source.isnumeric() or source.endswith('.txt') or source.lower().startswith(

+ ('rtsp://', 'rtmp://', 'http://', 'https://'))

+

+ # Directories

+ save_dir = Path(increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok)) # increment run

+ (save_dir / 'labels' if save_txt else save_dir).mkdir(parents=True, exist_ok=True) # make dir

+

+ # Initialize

+ set_logging()

+ device = select_device(opt.device)

+ half = device.type != 'cpu' # half precision only supported on CUDA

+

+ # Load model

+ model = attempt_load(weights, map_location=device) # load FP32 model

+ stride = int(model.stride.max()) # model stride

+ imgsz = check_img_size(imgsz, s=stride) # check img_size

+

+ if trace:

+ model = TracedModel(model, device, opt.img_size)

+

+ if half:

+ model.half() # to FP16

+

+ # Second-stage classifier

+ classify = False

+ if classify:

+ modelc = load_classifier(name='resnet101', n=2) # initialize

+ modelc.load_state_dict(torch.load('weights/resnet101.pt', map_location=device)['model']).to(device).eval()

+

+ # Set Dataloader

+ vid_path, vid_writer = None, None

+ if webcam:

+ view_img = check_imshow()

+ cudnn.benchmark = True # set True to speed up constant image size inference

+ dataset = LoadStreams(source, img_size=imgsz, stride=stride)

+ else:

+ dataset = LoadImages(source, img_size=imgsz, stride=stride)

+

+ # Get names and colors

+ names = model.module.names if hasattr(model, 'module') else model.names

+ colors = [[random.randint(0, 255) for _ in range(3)] for _ in names]

+

+ # Run inference

+ if device.type != 'cpu':

+ model(torch.zeros(1, 3, imgsz, imgsz).to(device).type_as(next(model.parameters()))) # run once

+ old_img_w = old_img_h = imgsz

+ old_img_b = 1

+

+ t0 = time.time()

+ for path, img, im0s, vid_cap in dataset:

+ img = torch.from_numpy(img).to(device)

+ img = img.half() if half else img.float() # uint8 to fp16/32

+ img /= 255.0 # 0 - 255 to 0.0 - 1.0

+ if img.ndimension() == 3:

+ img = img.unsqueeze(0)

+

+ # Warmup

+ if device.type != 'cpu' and (old_img_b != img.shape[0] or old_img_h != img.shape[2] or old_img_w != img.shape[3]):

+ old_img_b = img.shape[0]

+ old_img_h = img.shape[2]

+ old_img_w = img.shape[3]

+ for i in range(3):

+ model(img, augment=opt.augment)[0]

+

+ # Inference

+ t1 = time_synchronized()

+ with torch.no_grad(): # Calculating gradients would cause a GPU memory leak

+ pred = model(img, augment=opt.augment)[0]

+ t2 = time_synchronized()

+

+ # Apply NMS

+ pred = non_max_suppression(pred, opt.conf_thres, opt.iou_thres, classes=opt.classes, agnostic=opt.agnostic_nms)

+ t3 = time_synchronized()

+

+ # Apply Classifier

+ if classify:

+ pred = apply_classifier(pred, modelc, img, im0s)

+

+ # Process detections

+ for i, det in enumerate(pred): # detections per image

+ if webcam: # batch_size >= 1

+ p, s, im0, frame = path[i], '%g: ' % i, im0s[i].copy(), dataset.count

+ else:

+ p, s, im0, frame = path, '', im0s, getattr(dataset, 'frame', 0)

+

+ p = Path(p) # to Path

+ save_path = str(save_dir / p.name) # img.jpg

+ txt_path = str(save_dir / 'labels' / p.stem) + ('' if dataset.mode == 'image' else f'_{frame}') # img.txt

+ gn = torch.tensor(im0.shape)[[1, 0, 1, 0]] # normalization gain whwh

+ if len(det):

+ # Rescale boxes from img_size to im0 size

+ det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()

+

+ # Print results

+ for c in det[:, -1].unique():

+ n = (det[:, -1] == c).sum() # detections per class

+ s += f"{n} {names[int(c)]}{'s' * (n > 1)}, " # add to string

+

+ # Write results

+ for *xyxy, conf, cls in reversed(det):

+ if save_txt: # Write to file

+ xywh = (xyxy2xywh(torch.tensor(xyxy).view(1, 4)) / gn).view(-1).tolist() # normalized xywh

+ line = (cls, *xywh, conf) if opt.save_conf else (cls, *xywh) # label format

+ with open(txt_path + '.txt', 'a') as f:

+ f.write(('%g ' * len(line)).rstrip() % line + '\n')

+

+ if save_img or view_img: # Add bbox to image

+ label = f'{names[int(cls)]} {conf:.2f}'

+ plot_one_box(xyxy, im0, label=label, color=colors[int(cls)], line_thickness=1)

+

+ # Print time (inference + NMS)

+ print(f'{s}Done. ({(1E3 * (t2 - t1)):.1f}ms) Inference, ({(1E3 * (t3 - t2)):.1f}ms) NMS')

+

+ # Stream results

+ if view_img:

+ cv2.imshow(str(p), im0)

+ cv2.waitKey(1) # 1 millisecond

+

+ # Save results (image with detections)

+ if save_img:

+ if dataset.mode == 'image':

+ cv2.imwrite(save_path, im0)

+ print(f" The image with the result is saved in: {save_path}")

+ else: # 'video' or 'stream'

+ if vid_path != save_path: # new video

+ vid_path = save_path

+ if isinstance(vid_writer, cv2.VideoWriter):

+ vid_writer.release() # release previous video writer

+ if vid_cap: # video

+ fps = vid_cap.get(cv2.CAP_PROP_FPS)

+ w = int(vid_cap.get(cv2.CAP_PROP_FRAME_WIDTH))

+ h = int(vid_cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

+ else: # stream

+ fps, w, h = 30, im0.shape[1], im0.shape[0]

+ save_path += '.mp4'

+ vid_writer = cv2.VideoWriter(save_path, cv2.VideoWriter_fourcc(*'mp4v'), fps, (w, h))

+ vid_writer.write(im0)

+

+ if save_txt or save_img:

+ s = f"\n{len(list(save_dir.glob('labels/*.txt')))} labels saved to {save_dir / 'labels'}" if save_txt else ''

+ #print(f"Results saved to {save_dir}{s}")

+

+ print(f'Done. ({time.time() - t0:.3f}s)')

+

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('--weights', nargs='+', type=str, default='yolov7.pt', help='model.pt path(s)')

+ parser.add_argument('--source', type=str, default='inference/images', help='source') # file/folder, 0 for webcam

+ parser.add_argument('--img-size', type=int, default=640, help='inference size (pixels)')

+ parser.add_argument('--conf-thres', type=float, default=0.25, help='object confidence threshold')

+ parser.add_argument('--iou-thres', type=float, default=0.45, help='IOU threshold for NMS')

+ parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')

+ parser.add_argument('--view-img', action='store_true', help='display results')

+ parser.add_argument('--save-txt', action='store_true', help='save results to *.txt')

+ parser.add_argument('--save-conf', action='store_true', help='save confidences in --save-txt labels')

+ parser.add_argument('--nosave', action='store_true', help='do not save images/videos')

+ parser.add_argument('--classes', nargs='+', type=int, help='filter by class: --class 0, or --class 0 2 3')

+ parser.add_argument('--agnostic-nms', action='store_true', help='class-agnostic NMS')

+ parser.add_argument('--augment', action='store_true', help='augmented inference')

+ parser.add_argument('--update', action='store_true', help='update all models')

+ parser.add_argument('--project', default='runs/detect', help='save results to project/name')

+ parser.add_argument('--name', default='exp', help='save results to project/name')

+ parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')

+ parser.add_argument('--no-trace', action='store_true', help="don't trace model")

+ opt = parser.parse_args()

+ print(opt)

+ #check_requirements(exclude=('pycocotools', 'thop'))

+

+ with torch.no_grad():

+ if opt.update: # update all models (to fix SourceChangeWarning)

+ for opt.weights in ['yolov7.pt']:

+ detect()

+ strip_optimizer(opt.weights)

+ else:

+ detect()

diff --git a/ipex/export.py b/ipex/export.py

new file mode 100644

index 000000000..cf918aa42

--- /dev/null

+++ b/ipex/export.py

@@ -0,0 +1,205 @@

+import argparse

+import sys

+import time

+import warnings

+

+sys.path.append('./') # to run '$ python *.py' files in subdirectories

+

+import torch

+import torch.nn as nn

+from torch.utils.mobile_optimizer import optimize_for_mobile

+

+import models

+from models.experimental import attempt_load, End2End

+from utils.activations import Hardswish, SiLU

+from utils.general import set_logging, check_img_size

+from utils.torch_utils import select_device

+from utils.add_nms import RegisterNMS

+

+if __name__ == '__main__':

+ parser = argparse.ArgumentParser()

+ parser.add_argument('--weights', type=str, default='./yolor-csp-c.pt', help='weights path')

+ parser.add_argument('--img-size', nargs='+', type=int, default=[640, 640], help='image size') # height, width

+ parser.add_argument('--batch-size', type=int, default=1, help='batch size')

+ parser.add_argument('--dynamic', action='store_true', help='dynamic ONNX axes')

+ parser.add_argument('--dynamic-batch', action='store_true', help='dynamic batch onnx for tensorrt and onnx-runtime')

+ parser.add_argument('--grid', action='store_true', help='export Detect() layer grid')

+ parser.add_argument('--end2end', action='store_true', help='export end2end onnx')

+ parser.add_argument('--max-wh', type=int, default=None, help='None for tensorrt nms, int value for onnx-runtime nms')

+ parser.add_argument('--topk-all', type=int, default=100, help='topk objects for every images')

+ parser.add_argument('--iou-thres', type=float, default=0.45, help='iou threshold for NMS')

+ parser.add_argument('--conf-thres', type=float, default=0.25, help='conf threshold for NMS')

+ parser.add_argument('--device', default='cpu', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')

+ parser.add_argument('--simplify', action='store_true', help='simplify onnx model')

+ parser.add_argument('--include-nms', action='store_true', help='include NMS in the exported model')

+ parser.add_argument('--fp16', action='store_true', help='CoreML FP16 half-precision export')

+ parser.add_argument('--int8', action='store_true', help='CoreML INT8 quantization')

+ opt = parser.parse_args()

+ opt.img_size *= 2 if len(opt.img_size) == 1 else 1 # expand a single size to [size, size]

+ opt.dynamic = opt.dynamic and not opt.end2end

+ opt.dynamic = False if opt.dynamic_batch else opt.dynamic

+ print(opt)

+ set_logging()

+ t = time.time()

+

+ # Load PyTorch model

+ device = select_device(opt.device)

+ model = attempt_load(opt.weights, map_location=device) # load FP32 model

+ labels = model.names

+

+ # Checks

+ gs = int(max(model.stride)) # grid size (max stride)

+ opt.img_size = [check_img_size(x, gs) for x in opt.img_size] # verify img_size are gs-multiples

+

+ # Input

+ img = torch.zeros(opt.batch_size, 3, *opt.img_size).to(device) # dummy input of shape (batch_size, 3, height, width)

+

+ # Update model

+ for k, m in model.named_modules():

+ m._non_persistent_buffers_set = set() # pytorch 1.6.0 compatibility

+ if isinstance(m, models.common.Conv): # assign export-friendly activations

+ if isinstance(m.act, nn.Hardswish):

+ m.act = Hardswish()

+ elif isinstance(m.act, nn.SiLU):

+ m.act = SiLU()

+ # elif isinstance(m, models.yolo.Detect):

+ # m.forward = m.forward_export # assign forward (optional)

+ model.model[-1].export = not opt.grid # set Detect() layer grid export

+ y = model(img) # dry run

+ if opt.include_nms:

+ model.model[-1].include_nms = True

+ y = None

+

+ # TorchScript export

+ try:

+ print('\nStarting TorchScript export with torch %s...' % torch.__version__)

+ f = opt.weights.replace('.pt', '.torchscript.pt') # filename

+ ts = torch.jit.trace(model, img, strict=False)

+ ts.save(f)

+ print('TorchScript export success, saved as %s' % f)

+ except Exception as e:

+ print('TorchScript export failure: %s' % e)

+

+ # CoreML export

+ try:

+ import coremltools as ct

+

+ print('\nStarting CoreML export with coremltools %s...' % ct.__version__)

+ # convert model from torchscript and apply pixel scaling as per detect.py

+ ct_model = ct.convert(ts, inputs=[ct.ImageType('image', shape=img.shape, scale=1 / 255.0, bias=[0, 0, 0])])

+ bits, mode = (8, 'kmeans_lut') if opt.int8 else (16, 'linear') if opt.fp16 else (32, None)

+ if bits < 32:

+ if sys.platform.lower() == 'darwin': # quantization only supported on macOS

+ with warnings.catch_warnings():

+ warnings.filterwarnings("ignore", category=DeprecationWarning) # suppress numpy==1.20 float warning

+ ct_model = ct.models.neural_network.quantization_utils.quantize_weights(ct_model, bits, mode)

+ else:

+ print('quantization only supported on macOS, skipping...')

+

+ f = opt.weights.replace('.pt', '.mlmodel') # filename

+ ct_model.save(f)

+ print('CoreML export success, saved as %s' % f)

+ except Exception as e:

+ print('CoreML export failure: %s' % e)

+

+ # TorchScript-Lite export

+ try:

+ print('\nStarting TorchScript-Lite export with torch %s...' % torch.__version__)

+ f = opt.weights.replace('.pt', '.torchscript.ptl') # filename

+ tsl = torch.jit.trace(model, img, strict=False)

+ tsl = optimize_for_mobile(tsl)

+ tsl._save_for_lite_interpreter(f)