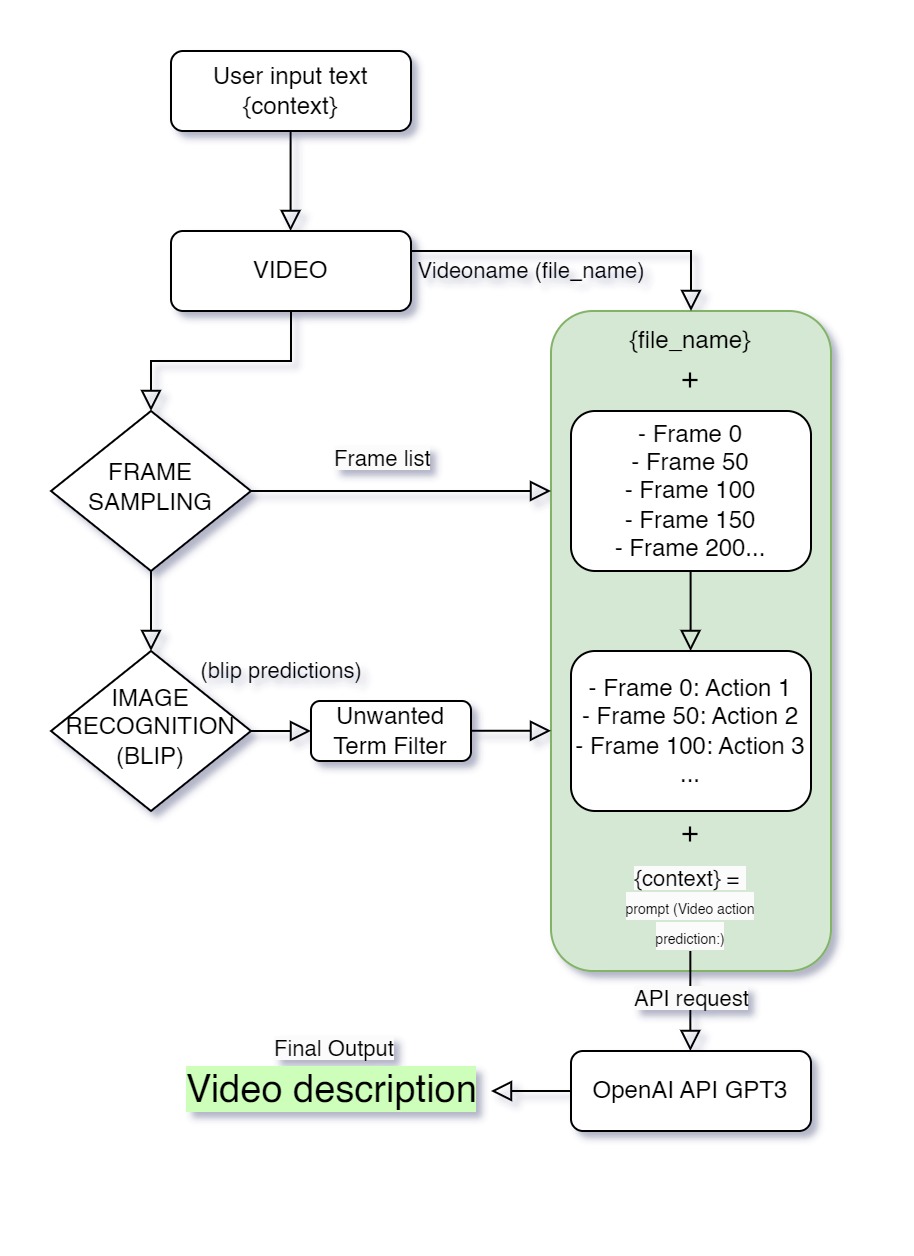

Video Action Recognition using Blip and GPT-3

VideoBlip is a script that generates natural language descriptions of videos. It extracts frames from a video, passes them through the Blip recognition model to identify what each frame contains, and sends those predictions to GPT-3, which writes a text description of what happens in the video. You can upload any number of files at once. The script can serve as a natural language metadata generator or be used to build large datasets of videos paired with descriptions.
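The glue between the three stages described above (frame extraction, captioning, text generation) might look roughly like the sketch below. It is not the script's actual code: the function names, the one-frame-per-second sampling interval, and the prompt wording are all assumptions made for illustration, and the captioning and GPT-3 calls themselves are left out.

```python
from typing import List


def sample_frame_indices(total_frames: int, fps: float, every_seconds: float = 1.0) -> List[int]:
    """Return indices of frames spaced roughly `every_seconds` apart (assumed interval)."""
    if total_frames <= 0 or fps <= 0 or every_seconds <= 0:
        return []
    step = max(1, round(fps * every_seconds))
    return list(range(0, total_frames, step))


def collapse_repeats(captions: List[str]) -> List[str]:
    """Drop empty captions and consecutive duplicates, which are common between adjacent frames."""
    collapsed: List[str] = []
    for caption in captions:
        text = caption.strip()
        if text and (not collapsed or collapsed[-1] != text):
            collapsed.append(text)
    return collapsed


def build_prompt(captions: List[str]) -> str:
    """Turn per-frame captions into a single prompt for a text model (hypothetical wording)."""
    lines = [f"{i}. {c}" for i, c in enumerate(collapse_repeats(captions), start=1)]
    return (
        "The following captions describe consecutive frames of one video:\n"
        + "\n".join(lines)
        + "\nDescribe in one short paragraph what happens in the video."
    )
```

For example, `sample_frame_indices(300, 30.0)` selects one frame per second from a ten-second clip at 30 fps, and `build_prompt` would then receive the captions produced for those frames.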

- First, make a copy of this notebook so that you can replace the API key in the code.

- Go to File, Save a copy in Drive.

- Change `YOUR_API_KEY` to your actual OpenAI API key.

- Run the script cell by cell and wait for the processing to finish.
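If you would rather not paste the key directly into the notebook, one option is to read it from an environment variable. The sketch below is an assumption, not part of the script: `load_openai_key` is a hypothetical helper, and `OPENAI_API_KEY` is simply the variable name chosen here.

```python
import os


def load_openai_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Read the API key from the environment and reject the unedited placeholder."""
    key = os.environ.get(env_var, "").strip()
    if not key or key == "YOUR_API_KEY":
        raise RuntimeError(
            f"Set {env_var} to your actual OpenAI API key before running the script."
        )
    return key
```

The returned string can then be used wherever the notebook currently contains `YOUR_API_KEY`.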

The script requires a machine with a GPU for optimal performance. If you don't have a GPU, you can still run the script, but it may take significantly longer to complete.
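To see whether the runtime has a GPU before starting a long job, a check along these lines can help. It assumes the models run on PyTorch, which this README does not state, and `pick_device` is a hypothetical helper name.

```python
def pick_device() -> str:
    """Return "cuda" when a GPU is usable through PyTorch, otherwise "cpu"."""
    try:
        import torch  # assumption: the notebook's models run on PyTorch
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


print(f"Running on: {pick_device()}")
```

In Colab, a GPU can usually be enabled under Runtime, Change runtime type.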

Check out an example of the script in action here.

We're excited to have you contribute! Feel free to fork the repository, add new features, and submit pull requests. Let's make VideoBlip better together!

This project is licensed under the MIT license.